!!!!! V1.0.0 cannot create stories yet. It only generates sentences whose meaning is connected to the sentence you give it, and they are written one after another !!!!!

This will be an AI story writer: given a sentence, he will compose a short story. He may not be very accurate, but I am proud of myself because the sentences make sense 😁😎😎🎉!!!

Made especially to help other people understand how AI and LLMs work!!!

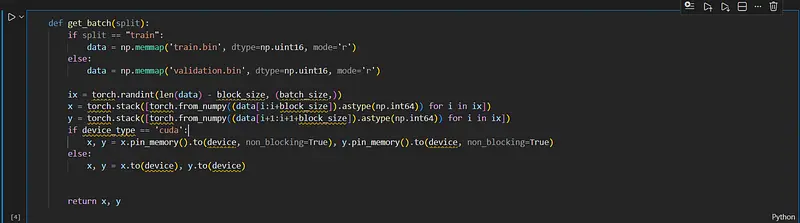

Basic theory lessons and code on Colab.

P.S. The AI does not work very well yet. He can create sentences that have meaning, but they are not closely related to each other. The punctuation is fairly good, with only a few small mistakes.

This is because of the limited training I could give my AI: my computer isn't very powerful and I only have the Colab free tier, so I couldn't train it for long or experiment with better learning rates. 😉

You can now try my AI V1.0.0 on a real website; see the README. Give him a sentence without a period at the end and he will generate a little story.
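For readers curious how "give it a sentence and it keeps writing" can work in principle, here is a minimal sketch. It assumes a toy word-level bigram model, which is **not** the model this project uses (its architecture is not described here); the tiny corpus and the names `train_bigrams` and `continue_text` are hypothetical, chosen only for illustration. Like an LLM, it extends the prompt one word at a time, each word chosen based on what came before.

```python
import random
from collections import defaultdict

# Hypothetical toy training text; the real model's training data is not described here.
CORPUS = (
    "the cat sat on the mat . the cat saw a dog . "
    "the dog ran to the park . a dog sat on the grass ."
)


def train_bigrams(text: str) -> dict[str, list[str]]:
    """Record, for every word, the words that followed it in the training text."""
    words = text.split()
    table: dict[str, list[str]] = defaultdict(list)
    for current, nxt in zip(words, words[1:]):
        table[current].append(nxt)
    return dict(table)


def continue_text(prompt: str, table: dict[str, list[str]],
                  max_words: int = 20, seed: int = 0) -> str:
    """Extend the prompt word by word; each new word depends only on the previous one."""
    rng = random.Random(seed)  # seeded so the same prompt gives the same output
    words = prompt.split()
    for _ in range(max_words):
        followers = table.get(words[-1])
        if not followers:  # last word never had a successor in training: stop
            break
        words.append(rng.choice(followers))  # frequent followers are picked more often
    return " ".join(words)


if __name__ == "__main__":
    model = train_bigrams(CORPUS)
    print(continue_text("the cat", model))
```

In this toy, "." is just another token, so the loop keeps going past the end of a sentence and the output reads as several loosely connected sentences in a row. A real neural model looks at much more context than one previous word, which is why its sentences can stay closer to the prompt.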

Also see the demo for a portable app.