Shipped this project!

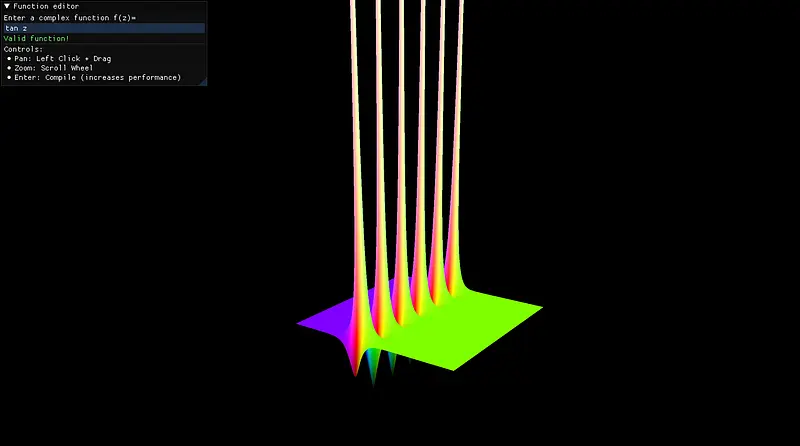

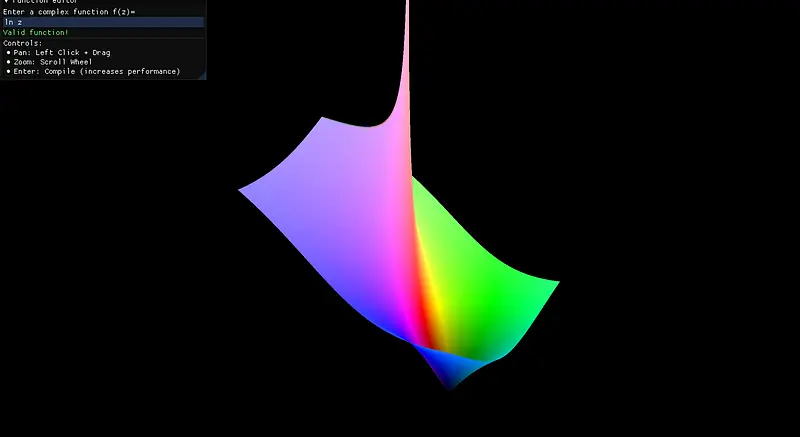

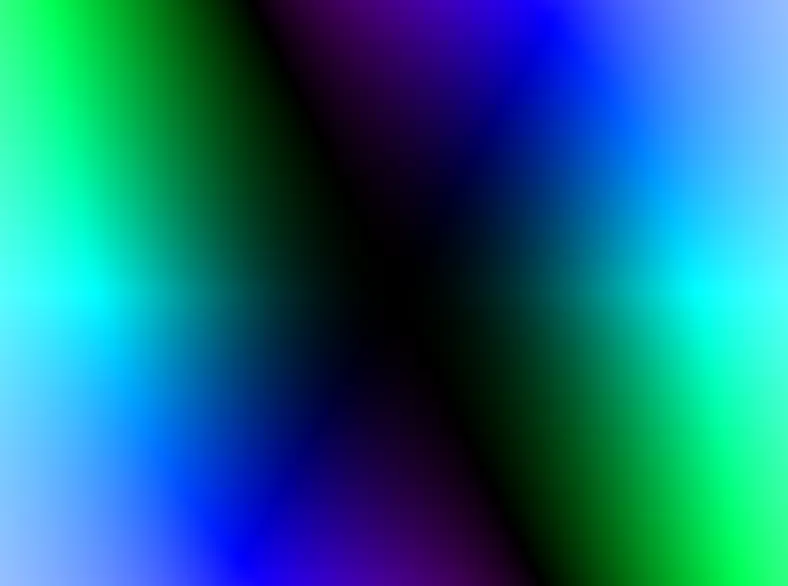

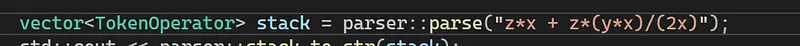

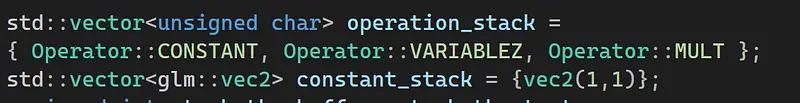

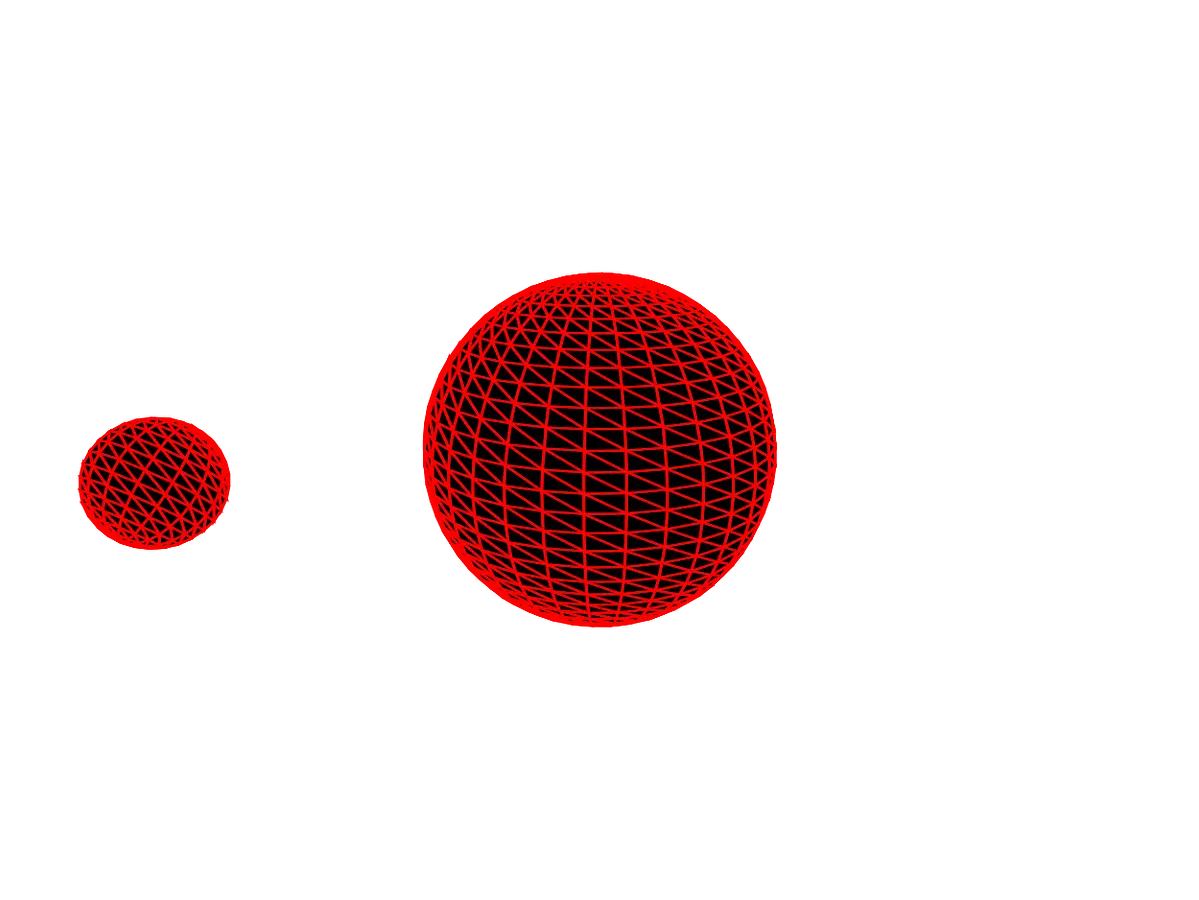

This is a complex function plotter implemented in C++ and OpenGL, ported to the web via WebAssembly and WebGL! It is implemented from scratch, with no outside dependencies, and fully supports every elementary function, plus some non-elementary ones (Riemann zeta, Gamma and factorial). It supports analytic differentiation, 3D plots with user-defined height maps, animations, and everything else that might come in handy for someone working with complex analysis. It is essentially Desmos, but for complex functions!

The main purpose of this short re-ship is to fix some issues that people pointed out, which would have left the project incomplete had they not been fixed. It was a very fun project to develop, and now, hopefully for real, I can finally put it to rest!

If you don’t know the math behind this, and would like to understand it, please check out this documentation I prepared: https://github.com/Sekqies/complex-plotter/blob/main/docs/the-basics.md

Some other useful documentation I have written for this project:

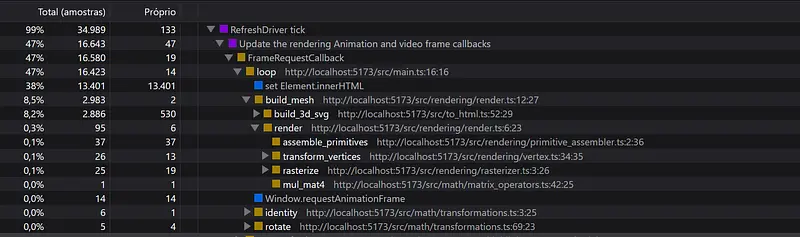

- **Optimizations:** Did you know that this is the fastest complex function plotter there is? There are reasons for that!

- **Features:** A list of everything this plotter does.

- **Advanced:** An explanation of how this works.

That’s it for now, folks! Happy plotting!

- Sekqies
