A 3D graphics engine with lighting and reflections implemented from scratch in pure HTML. That is, no img or canvas tags, and no CSS.

🔥 AVD marked your project as well cooked! As a prize for your nicely cooked project, look out for a bonus prize in the mail :)

Shipped this project!

This is a 3D software rasterizer built from scratch, running entirely on the CPU, and entirely in HTML! It implements the entire 3D rendering pipeline, supports custom meshes, animations, and lighting, and even includes its own raytracer. It is as optimized as a project like this can be! No CSS here either! All rendering is done through dynamically created <svg> tags.

I wanted to challenge myself by setting as many restrictions as possible, in order to create something I’m passionate about (in this case, 3D graphics), and this turned out to be such a great learning experience. I used to take the high-level functionality of libraries like OpenGL for granted, and now that I had to figure out how to implement it (in a more restricted environment), I am so, so much more thankful for what the GPU gods have bestowed upon us.

This is a joke project turned serious after I poured far, far too much time into it. It was very fun to create, and I learned a lot in the process. Have fun with it!

- Sekqies

We are done!

All of the last additions that I felt were needed to ship this project have been made. Most of them were UI overhauls and quality-of-life changes, but there is one new change that deserves a mention!

I noticed in some of my demos that a light that was supposedly covered by another object still cast light on other meshes. I initially found that weird, but it makes sense: we never checked whether anything sits between the light and the surface!

The change I immediately thought of was shadow mapping. Basically, you “render” the scene from the light’s “perspective”, and when shading a vertex, you check (in a pre-computed buffer) whether any other object is closer to the light. This seemed like the obvious high-performance choice, but once again my plans were foiled by the absence of a pixel rasterizer. Implementing shadow mapping would mean rasterizing my entire (svg) scene into 2D and holding data the size of the screen, which is, surprisingly, worse than doing per-vertex analysis.

So, I had to implement raycasting. This is obviously expensive, but I thought of some optimization tricks to make things faster (mainly checking whether the ray intersects the mesh’s bounding box before doing the usual per-triangle calculations). It is still slow, but it is what it is.
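The bounding-box pre-check can be sketched with the classic "slab" test. This is a minimal illustration, assuming an axis-aligned box; the names `AABB` and `rayHitsBox` are hypothetical and not taken from the project. For a shadow ray, `dir` would be `light - vertex` and `maxT` would be 1, so only blockers between the vertex and the light count.

```typescript
type Vec3 = [number, number, number];

// Hypothetical axis-aligned bounding box for a mesh.
interface AABB {
  min: Vec3;
  max: Vec3;
}

// Slab test: true if origin + t * dir hits the box for some t in [0, maxT].
function rayHitsBox(origin: Vec3, dir: Vec3, box: AABB, maxT = Infinity): boolean {
  let tMin = 0;
  let tMax = maxT;
  for (let axis = 0; axis < 3; axis++) {
    if (dir[axis] === 0) {
      // Ray is parallel to this pair of planes: it must start between them.
      if (origin[axis] < box.min[axis] || origin[axis] > box.max[axis]) return false;
      continue;
    }
    const inv = 1 / dir[axis];
    let t0 = (box.min[axis] - origin[axis]) * inv;
    let t1 = (box.max[axis] - origin[axis]) * inv;
    if (t0 > t1) {
      const tmp = t0;
      t0 = t1;
      t1 = tmp;
    }
    tMin = Math.max(tMin, t0);
    tMax = Math.min(tMax, t1);
    if (tMin > tMax) return false; // the per-axis intervals no longer overlap
  }
  return true;
}
```

Only when this cheap test passes would the per-triangle intersection need to run for that mesh.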

I also deployed the project and changed the UI to be full screen, wrote some documentation, and got the readme up. This joke project turned out to be far bigger than I expected, so let’s treat it with some respect!

Attached, our new UI, and shading!

Commits

cf98b24: Added raytracing and shading

86497b4: UI Overhaul

a8ae8b4: Add readme

The bottom line is that we’ve got a finished UI! A lot of work was done, so I’ll keep my explanations short.

First, I wanted to add a way for a user to select a mesh and move it around with gizmos (the little colored arrows you see when you move an object around in, for instance, Blender or GMod). For that, our scene needs a way to know where each object is.

To do this, we normalize the coordinates of the user’s mouse on the screen, turn them into a point in our 3D space, take another point very, very far back from the screen (your eyes!) and draw a line through these two points. We shift it into our camera’s coordinate system, and then we see if it intersects something.

Rather than doing this vertex by vertex, we find an ellipsoid (a 3D ellipse, or a squashed sphere), use it to bound our object, and check for the intersection against this ellipsoid. This way, even a mesh with 20k+ triangles gets practically instantaneous collision checking!
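As a rough sketch of the two steps above: first the mouse position is normalized to the [-1, 1] range, then the ray is tested against the ellipsoid by rescaling space so the ellipsoid becomes a unit sphere. The names (`toNormalizedCoords`, `Ellipsoid`, `rayHitsEllipsoid`) are hypothetical, and the ellipsoid is assumed to be axis-aligned; the project's own version may differ.

```typescript
type Vec3 = [number, number, number];

// Hypothetical axis-aligned bounding ellipsoid.
interface Ellipsoid {
  center: Vec3;
  radii: Vec3;
}

// Map pixel coordinates to [-1, 1] on both axes, with y pointing up.
function toNormalizedCoords(mouseX: number, mouseY: number, width: number, height: number): [number, number] {
  return [(2 * mouseX) / width - 1, 1 - (2 * mouseY) / height];
}

const dot = (a: Vec3, b: Vec3): number => a[0] * b[0] + a[1] * b[1] + a[2] * b[2];

function rayHitsEllipsoid(origin: Vec3, dir: Vec3, e: Ellipsoid): boolean {
  // Divide by the radii: in this space the ellipsoid is a unit sphere at the origin.
  const o: Vec3 = [0, 0, 0];
  const d: Vec3 = [0, 0, 0];
  for (let i = 0; i < 3; i++) {
    o[i] = (origin[i] - e.center[i]) / e.radii[i];
    d[i] = dir[i] / e.radii[i];
  }
  // Solve |o + t d|^2 = 1, i.e. a t^2 + b t + c = 0.
  const a = dot(d, d);
  const b = 2 * dot(o, d);
  const c = dot(o, o) - 1;
  const discriminant = b * b - 4 * a * c;
  if (a === 0 || discriminant < 0) return false;
  // If the far root is non-negative, part of the ellipsoid lies in front of the origin.
  const tFar = (-b + Math.sqrt(discriminant)) / (2 * a);
  return tFar >= 0;
}
```

The cost is one quadratic per object, regardless of how many triangles the mesh has.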

All our other changes are essentially modifications to Node, plus tracking of the different attributes a node might have, like an attached light, an animation, etc. Everything being a node made developing the UI far easier than I expected, and far, far less of a headache than I anticipated.

Attached, the culmination of everything we’ve done so far!

Commits

c7d57d2: Added animations

1d1e3bf: Added point lights

694c72d: Added object importing and modularized Inspector

bfe7ba1: Finished gizmos implementation

1664107: Gizmos working with absolute coordinates

b18f978: Gizmos and state machine

b617e9e: Added ellipsoid bounding box for Nodes

We’ve got a UI up and running!

This part is the bane of my existence because there’s not much that is technical or fun to do, and it’s mostly a way to display (and subsequently drag out the development of) an already finished project. But, unfortunately, it’s necessary. Otherwise, I’d be doing the equivalent of building a computer with no screen, no user input and no output: there’s no way to know it even works!

I thought of some different ideas (making a physics engine, orbit simulation, animations, etc) but ultimately settled on just making a simple inspector where a user can create primitive shapes, move them around, add lights, etc. This is by no means a complicated task, but it certainly is for a developer like me.

We’ve got some of this up and running! Not my proudest work and it’s due some changes, but it will suffice for now. I expect I will be able to ship this project soon.

The only architecturally interesting change is that we’re now managing Nodes instead of Meshes. The difference is that Nodes carry their own model matrices, position, rotation and other information relevant for drawing, and regenerate their own matrices whenever necessary.
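One common way to regenerate a matrix "whenever necessary" is a dirty flag, sketched below. This is only an illustration under assumptions: the class is named `SceneNode` (a hypothetical name chosen to avoid clashing with the DOM's `Node`), rotation is left out for brevity, and the matrix is stored column-major; the project's Node may be organized differently.

```typescript
class SceneNode {
  private position: [number, number, number] = [0, 0, 0];
  private scale = 1;
  private dirty = true;
  // Allocated once; rewritten in place when the node changes.
  private readonly model = new Float32Array(16);
  rebuildCount = 0; // only here to show how often the matrix is rebuilt

  setPosition(x: number, y: number, z: number): void {
    this.position = [x, y, z];
    this.dirty = true;
  }

  setScale(scale: number): void {
    this.scale = scale;
    this.dirty = true;
  }

  // Column-major 4x4 matrix: uniform scale followed by translation.
  getModelMatrix(): Float32Array {
    if (this.dirty) {
      const m = this.model;
      m.fill(0);
      m[0] = this.scale;
      m[5] = this.scale;
      m[10] = this.scale;
      m[12] = this.position[0];
      m[13] = this.position[1];
      m[14] = this.position[2];
      m[15] = 1;
      this.dirty = false;
      this.rebuildCount++;
    }
    return this.model;
  }
}
```

Several setter calls in a row cost a single rebuild, which happens only when the matrix is next requested.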

But I’ll let the work speak for itself: attached, our UI!

Commits

Commit 626dfa9: Added geometry normalization for imported vertices

Commit e5b2ad7: Added new primitives

Commit 85b98f5: Generalized 3d ngon creation

Commit ca18aa8: Finished basic inspector

Our graphics engine is done!

Or as done as it can be, really. A true graphics engine would have much more: textures, multicolored meshes, fragment shaders, interpolation, and many other things that are a consequence of the literal decades put into developing this area. It is, however, good enough when you take into account the limitations that svg imposes upon us.

First things first, a careful eye might have noticed that the last rendered scene was missing a certain ‘reflection’ you get from illuminating most objects. Think of the little white spot you see on a billiard ball when it’s held against a light source. This is something that emerges naturally whenever you have a reflective surface, because it’s just the result of light reflecting off it and going directly to your eyes.

To give our rendered meshes the same effect, we implement something called the Phong reflection model, which is the “algorithm” (or, to put it better, the set of formulas) for calculating the light that’ll reach our eyes. This is a relatively expensive operation (it involves raising a value to the 64th power), so I left it as an optional feature, per object.
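For reference, the specular term of the Phong model can be sketched as follows. This is a textbook version under assumptions, not the project's code: `phongSpecular` is a hypothetical name, vectors point away from the surface, and the exponent defaults to the 64 mentioned above.

```typescript
type Vec3 = [number, number, number];

const dot = (a: Vec3, b: Vec3): number => a[0] * b[0] + a[1] * b[1] + a[2] * b[2];

function normalize(v: Vec3): Vec3 {
  const length = Math.hypot(v[0], v[1], v[2]) || 1;
  return [v[0] / length, v[1] / length, v[2] / length];
}

// Specular highlight strength in [0, 1].
// toLight and toViewer both point away from the surface point.
function phongSpecular(normal: Vec3, toLight: Vec3, toViewer: Vec3, shininess = 64): number {
  const n = normalize(normal);
  const l = normalize(toLight);
  const v = normalize(toViewer);
  const nDotL = dot(n, l);
  if (nDotL <= 0) return 0; // the light is behind the surface
  // Mirror the light direction around the normal: r = 2 (n . l) n - l
  const r: Vec3 = [2 * nDotL * n[0] - l[0], 2 * nDotL * n[1] - l[1], 2 * nDotL * n[2] - l[2]];
  return Math.pow(Math.max(0, dot(r, v)), shininess);
}
```

The high exponent is what makes the highlight small and sharp: the value falls off very quickly as the viewer moves away from the mirror direction.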

Second, and the reason that I’m saying that the engine is “complete”, is that we can now just read data from an .obj file and it will be rendered to the screen (after I wrote the parser for it, of course).
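To give an idea of what such a parser involves, here is a minimal sketch that only reads `v` (vertex) and `f` (face) lines and ignores everything else in the file. The function name `parseObj` and the returned shape are hypothetical; the project's parser likely handles more of the format.

```typescript
interface ParsedObj {
  vertices: number[]; // x, y, z triples
  indices: number[];  // 0-based vertex indices, three per triangle
}

function parseObj(text: string): ParsedObj {
  const vertices: number[] = [];
  const indices: number[] = [];
  for (const rawLine of text.split("\n")) {
    const parts = rawLine.trim().split(/\s+/);
    if (parts[0] === "v") {
      vertices.push(Number(parts[1]), Number(parts[2]), Number(parts[3]));
    } else if (parts[0] === "f") {
      const vertexCount = vertices.length / 3;
      const face = parts.slice(1).map((token) => {
        // parseInt stops at the first "/", so "7/1/3" yields the vertex index 7.
        const index = parseInt(token, 10);
        // OBJ indices are 1-based; negative ones count back from the latest vertex.
        return index > 0 ? index - 1 : vertexCount + index;
      });
      // Split polygons into a triangle fan around the first vertex.
      for (let i = 1; i + 1 < face.length; i++) {
        indices.push(face[0], face[i], face[i + 1]);
      }
    }
  }
  return { vertices, indices };
}
```

A quad such as `f 1 2 3 4` becomes the two triangles (0, 1, 2) and (0, 2, 3).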

We can render meshes with a surprisingly high number of triangles with this method. I could render a model of the Eiffel Tower with over 400k triangles (running at around 3 fps)!

Attached, renders of some more complex 3D models!

Commits

Commit 9b2fdb3: Added specular lighting

Commit e91435b: Adedd obj parsing

And let there be light!

Simulating light is the single thing that graphics programming (and hardware!) has been working towards for the past few decades. This is because, in the real world, light behaves in a very complicated manner that only the great GPU that runs the universe can render, so we can only ever approximate how it truly behaves. You may have heard the terms raytracing or raymarching before, and those are just that: approximations of how light behaves.

For our (very limited) graphics engine though, we have to do just the basics: get the color of each vertex under the effect of multiple point lights. This is a task with many steps:

First, we have to figure out which way each of our vertices is facing. This direction is called a normal, and we use it to find the intensity of the light shining on a face. Logically, this matters: if you hold your phone up against the sun, the side facing the sun will be bright, and the side facing you won’t.

Then, we have to figure out the resulting light on a vertex. This is additive: we just have to sum all the incoming lights and intensities into one. Then, we have to take the object’s original color into account for the resulting color: this is multiplicative.

The final step is drawing these colors onto the screen. Usually, we’d take the colored vertices of each triangle and interpolate between them, but this is impossible in svg: we have to assign each face a single color. We do this by averaging the colors of the face’s vertices.
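The three steps above can be sketched roughly as follows. This is a simplified illustration under assumptions: the names (`PointLight`, `shadeVertex`, `faceColor`) are hypothetical, colors are RGB triples in [0, 1], and distance attenuation is ignored.

```typescript
type Vec3 = [number, number, number];

// Hypothetical point light: a position, an RGB color and a strength.
interface PointLight {
  position: Vec3;
  color: Vec3;
  intensity: number;
}

function normalize(v: Vec3): Vec3 {
  const length = Math.hypot(v[0], v[1], v[2]) || 1;
  return [v[0] / length, v[1] / length, v[2] / length];
}

function shadeVertex(position: Vec3, normal: Vec3, baseColor: Vec3, lights: PointLight[]): Vec3 {
  const n = normalize(normal);
  const incoming: Vec3 = [0, 0, 0];
  for (const light of lights) {
    const toLight = normalize([
      light.position[0] - position[0],
      light.position[1] - position[1],
      light.position[2] - position[2],
    ]);
    // Step 1: how directly the vertex faces the light (0 when facing away).
    const facing = Math.max(0, n[0] * toLight[0] + n[1] * toLight[1] + n[2] * toLight[2]);
    // Step 2: lights are additive.
    for (let c = 0; c < 3; c++) incoming[c] += light.color[c] * light.intensity * facing;
  }
  // The object's own color is multiplicative; the light is clamped to 1 first.
  return [
    baseColor[0] * Math.min(1, incoming[0]),
    baseColor[1] * Math.min(1, incoming[1]),
    baseColor[2] * Math.min(1, incoming[2]),
  ];
}

// Step 3: one flat color per face, the average of its three vertex colors.
function faceColor(a: Vec3, b: Vec3, c: Vec3): Vec3 {
  return [(a[0] + b[0] + c[0]) / 3, (a[1] + b[1] + c[1]) / 3, (a[2] + b[2] + c[2]) / 3];
}
```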

This implies more memory usage to store everything needed: meshes, colors, lights, etc. So, as usual, this came with a complete refactor of Scene to make it easier to use.

Attached: the lights!

Commits

795addc: Added lighting

b7ce605: Added normals

1c48364: Lighting almost finished

0324cd9: Lighting working!

We are now doing things with path and fill (and properly depth sorting)

Since we couldn’t draw anything that wasn’t either a black silhouette or a wireframe outline, the order in which things are drawn never really mattered. Now, it does.

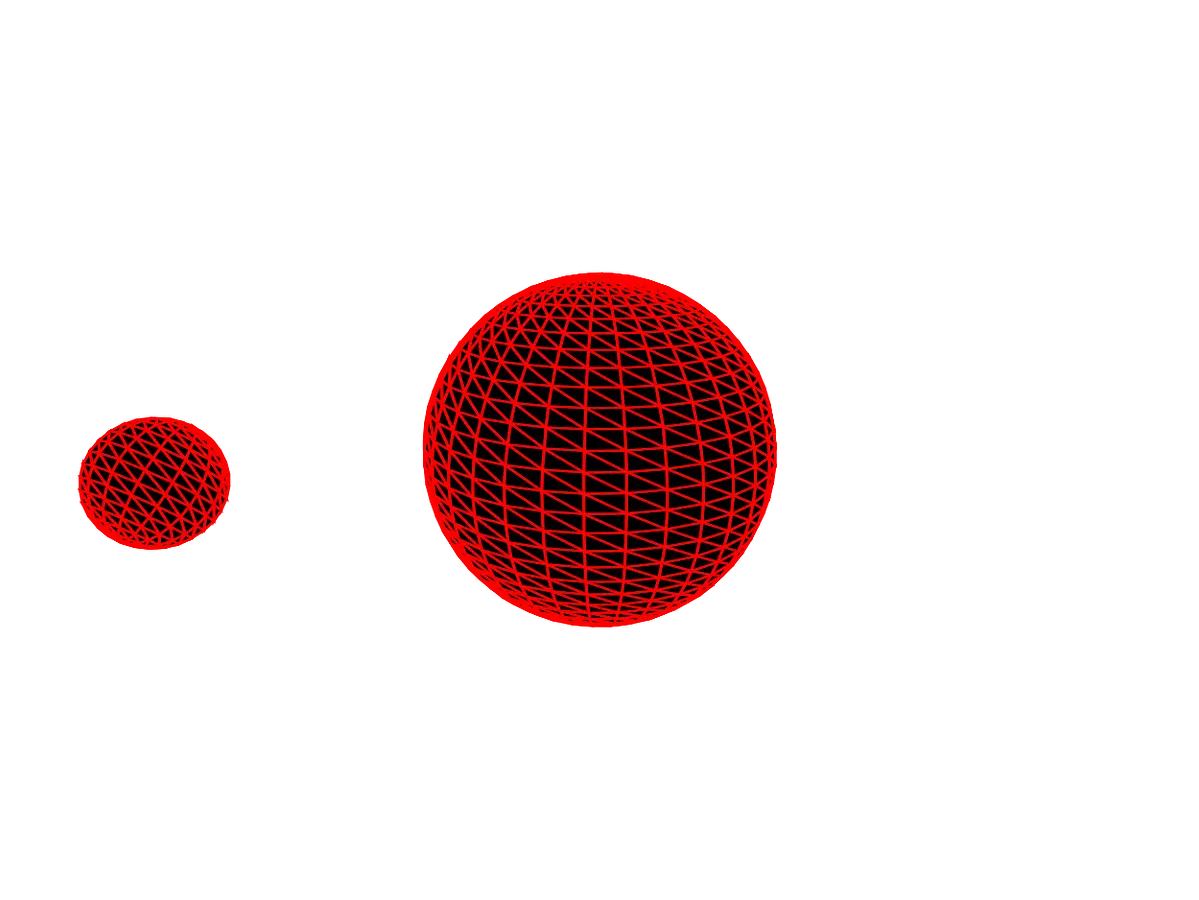

In the previous devlog, I “fixed” this by sorting triangles so that what is farther from the screen is drawn first, and what is closer, last. This way, if anything is behind an object, it will be covered by it. To my horror, however, when I tried rendering two rotating spheres, this did not work at all.

This was because I was sorting the triangles of each mesh individually rather than in a global “scene” context. In simple terms:

- What we were doing:

  [3,1],[5,0] => [1,3],[0,5] => [1,3,0,5]

- What we were supposed to do:

  [3,1],[5,0] => [3,1,5,0] => [0,1,3,5]

At a high level, this is an obvious enough fix: just merge all meshes into one huge array, and sort it. But it comes with a huge problem (for a memory nut, like me): we’ll be doubling the memory we allocate.

Thankfully, JavaScript’s typed arrays kindly provide us with the subarray method, which gives us a “view”, or a reference, to a memory chunk of a larger array. Consequently, we can create a large buffer with all the data we’ll need, and give each mesh a chunk of it.

In our program, we call that large buffer a Scene.
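A minimal sketch of the idea, reusing the numbers from the example above. The names (`Scene`, `createScene`, `sortedOrder`) are hypothetical here and the layout is an assumption: each mesh writes into its own `subarray` view, and the global ordering is obtained by sorting indices into the shared buffer rather than copying the data.

```typescript
interface Scene {
  buffer: Float32Array;  // one allocation shared by every mesh
  views: Float32Array[]; // views[i] is mesh i's window into the buffer
}

function createScene(floatsPerMesh: number[]): Scene {
  const total = floatsPerMesh.reduce((sum, size) => sum + size, 0);
  const buffer = new Float32Array(total);
  const views: Float32Array[] = [];
  let offset = 0;
  for (const size of floatsPerMesh) {
    // subarray does not copy: writes through the view land in the buffer.
    views.push(buffer.subarray(offset, offset + size));
    offset += size;
  }
  return { buffer, views };
}

// Global draw order: sort indices by depth instead of moving the data itself.
function sortedOrder(depths: Float32Array): Uint32Array {
  const order = new Uint32Array(depths.length);
  for (let i = 0; i < order.length; i++) order[i] = i;
  return order.sort((a, b) => depths[a] - depths[b]);
}
```

With two meshes holding `[3,1]` and `[5,0]`, the shared buffer reads `[3,1,5,0]`, and walking it in the sorted order visits `0, 1, 3, 5` without a second copy of the mesh data.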

After doing all of this, I went to render the two overlapping spheres and… it didn’t work? I quickly learned that this was due to the way that <path> works in SVG: if we want depth, we need different DOM objects, rather than a single, large one. It is bad for performance, but ultimately necessary.

With all this, we got our vertex engine done! Attached, lots of triangles!

Commits

Commit eaaa1ea: Created Scene object

Commit 06e157a: Finished Scene logic

Commit df1a013: Depth working!

We got some new optimizations, one of which provides a visual effect. Also, news!

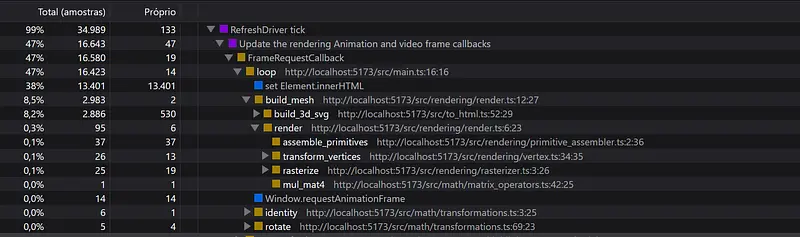

First of all, getting the pure optimizations out of the way: we’re moving away from manually manipulating strings. I noticed that in my old benchmark, 8.2% of the time was being spent in build_3d_svg. At first I found this weird, since this function is purely string manipulation, but, as it turns out, that is exactly the issue. String reallocation is expensive, and since strings aren’t mutable in JavaScript, I can’t just edit a pre-existing string.

The solution is to represent the strings as what they should be: character arrays. This involved creating a new helper class, StringBuffer, that represents strings as a Uint8Array, plus the helper functions needed to write strings and numbers into this byte array.
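The class might look something like the sketch below. Only the name StringBuffer comes from the devlog; the method names and behavior are assumptions made for illustration. It assumes ASCII-only output, integers only, and a capacity large enough that no bounds checks are needed.

```typescript
class StringBuffer {
  private readonly bytes: Uint8Array;
  private length = 0;

  constructor(capacity: number) {
    this.bytes = new Uint8Array(capacity); // allocated once, reused every frame
  }

  reset(): void {
    this.length = 0; // "clearing" costs nothing: old bytes are simply overwritten
  }

  // Assumes every character is ASCII (true for SVG path commands).
  writeAscii(text: string): void {
    for (let i = 0; i < text.length; i++) {
      this.bytes[this.length++] = text.charCodeAt(i);
    }
  }

  // Writes an integer digit by digit, without creating a temporary string.
  writeInt(value: number): void {
    if (value < 0) {
      this.bytes[this.length++] = 45; // "-"
      value = -value;
    }
    let digits = 1;
    for (let v = value; v >= 10; v = Math.floor(v / 10)) digits++;
    for (let i = digits - 1; i >= 0; i--) {
      this.bytes[this.length + i] = 48 + (value % 10); // 48 is "0"
      value = Math.floor(value / 10);
    }
    this.length += digits;
  }

  // A single conversion to a real string, done once at the end.
  toString(): string {
    return new TextDecoder().decode(this.bytes.subarray(0, this.length));
  }
}
```

Usage would be along the lines of `buffer.writeAscii("M"); buffer.writeInt(12);` for each path command, followed by one `toString()` per frame.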

Another thing: I thought I wouldn’t be able to use the stroke and fill attributes of svg because I thought this project could also be submitted to the #flavorless challenge. As it turns out, it can’t. So I get this freedom which, in turn, means I’ll get to actually color my 3D meshes.

If you’ve done 3D rendering in OpenGL before, you’ll likely be familiar with GL_DEPTH_TEST, which you enable so two meshes at different z positions don’t draw over each other incorrectly. This is a nice bonus that comes pre-made but, as with everything in our project, we had to implement it ourselves, through a technique called the Painter’s Algorithm. Usually, depth testing is done through a technique called z-buffering, but that doesn’t work for our svg-based project, because it requires pixels (while the only things we have here are vertices).

And, one last optimization: backface culling. We simply don’t draw triangles facing away from the camera.
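Both ideas fit in a few lines once triangles are in screen space. The sketch below is an assumption-laden illustration, not the project's code: `ScreenTriangle`, `windingSign` and `drawOrder` are hypothetical names, front faces are assumed to be the ones with a positive winding sign (the sign flips if the y axis or the winding convention is reversed), and a larger `depth` is assumed to mean farther from the camera.

```typescript
interface ScreenTriangle {
  points: [number, number, number, number, number, number]; // x0, y0, x1, y1, x2, y2
  depth: number; // e.g. the average z of the three vertices
}

// Twice the signed area of the triangle; its sign encodes the winding order.
function windingSign(t: ScreenTriangle): number {
  const [x0, y0, x1, y1, x2, y2] = t.points;
  return (x1 - x0) * (y2 - y0) - (x2 - x0) * (y1 - y0);
}

// Backface culling first, then painter's algorithm: farthest triangles come first.
function drawOrder(triangles: ScreenTriangle[]): ScreenTriangle[] {
  return triangles
    .filter((t) => windingSign(t) > 0)
    .sort((a, b) => b.depth - a.depth);
}
```

Culling before sorting also shrinks the array that has to be sorted, which matters since the sort is the expensive part.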

Commits

Commit f10e698: Optimized string writing to use fixed-size buffer

Commit 07573cd: Depth sorting & Backface culling

No new features for you! This development session was dedicated almost entirely to refactoring our render pipeline so it’s easier to use.

A graphics engine is pretty general-purpose: once I have one up and running, I’m free to do essentially whatever I feel like. The problem is that the quick graphics system I built yesterday is kind of bad.

When I was writing my last devlog, I wanted to make a quick demo scene to show the rendering engine up and running. In doing so, I realized that the way I had structured my data pipeline was kind of all over the place, and required me to keep track of a bunch of different buffers that I was cloning and moving every frame. I took a quick look at my performance graph, and found that 6% of the time was spent in the rasterize function, which allocated memory every time a new frame was drawn.

We can’t be having that. So, I decided to refactor my system to use a Mesh structure that holds three distinct buffers: our vertices, those vertices after being transformed, and them after being rasterized. This way, I allocate memory once, and modify these buffers at runtime, whenever needed.

This required rewriting every step of my rendering pipeline, including most of our matrix math, to mutate instead of copy and return. This is particularly annoying, because JavaScript passes objects by reference (good!), but reassigning a parameter inside a function doesn’t change the caller’s object (bad), and it doesn’t throw a warning if you try to.
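The pitfall is easy to reproduce. In this sketch (all names hypothetical, and only two of the mesh's buffers shown), the first function writes into a preallocated output buffer, while `brokenReset` silently does nothing because it only rebinds its local parameter.

```typescript
interface Mesh {
  vertices: Float32Array;    // original data, never modified
  transformed: Float32Array; // same size, overwritten every frame
}

function createMesh(vertices: number[]): Mesh {
  return {
    vertices: new Float32Array(vertices),
    transformed: new Float32Array(vertices.length), // allocated once
  };
}

// Mutating style: the result is written into `out`, nothing is allocated.
function translateInto(out: Float32Array, src: Float32Array, dx: number, dy: number, dz: number): void {
  for (let i = 0; i < src.length; i += 3) {
    out[i] = src[i] + dx;
    out[i + 1] = src[i + 1] + dy;
    out[i + 2] = src[i + 2] + dz;
  }
}

// Looks like it clears the buffer, but only the local variable `out` changes.
function brokenReset(out: Float32Array): void {
  out = new Float32Array(out.length);
}

// Mutates the caller's buffer in place.
function workingReset(out: Float32Array): void {
  out.fill(0);
}
```

After `brokenReset(mesh.transformed)` the mesh still holds its old values, with no error or warning, which is exactly the kind of bug that is hard to spot during a large refactor.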

Anyways, everything is now done! This leaves us free to try to further optimize the <svg>, or pivot into actually doing anything with our engine.

Attached, the new performance results! Note how the memory stays constant after the start.

Obs: I thought this would be a quick refactor, and didn’t plan ahead. No issues nor modularization of commits in this one :(

Commits

Commit 5d1a342: Refactor to use mesh system

We can render scenes in 3D now!

Wait, what? 3D? I thought this was a project about mapping images into <table> objects? Well, it turns out that this is boring, and far too slow.

It’s boring because it’s just a matter of converting a grid of pixel colors to a grid of table cells (which was already achieved in under 2 hours), and it’s slow because the DOM struggles to render more than ~10k elements at a time.

This led me to remember that the <svg> tag is allowed, and it lets me draw lines from one point to another natively. With this immense power in hand, I fell into every graphics programmer’s mid-life crisis: trying to implement a graphics engine by myself. Except I’m doing this in HTML instead of actually using the GPU. Fun!

To do this, we have some steps: getting vertices in 3D, applying transformations to them (translation, rotation), connecting the vertices as triangles and drawing them. Drawing them is what we’re actually doing with the <svg> tag.
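To make the middle steps concrete, here is a stripped-down sketch of transforming a vertex and projecting it to screen coordinates. The commits mention matrices and quaternions, so the project's math is certainly richer than this; the plain functions, their names, and the camera convention (at the origin, looking down +z, with a hypothetical `focalLength` in pixels) are assumptions for illustration only.

```typescript
type Vec3 = [number, number, number];

// Rotate a point around the Y axis by `angle` radians.
function rotateY(p: Vec3, angle: number): Vec3 {
  const c = Math.cos(angle);
  const s = Math.sin(angle);
  return [c * p[0] + s * p[2], p[1], -s * p[0] + c * p[2]];
}

function translate(p: Vec3, t: Vec3): Vec3 {
  return [p[0] + t[0], p[1] + t[1], p[2] + t[2]];
}

// Perspective projection onto the screen. Assumes p[2] > 0 (in front of the camera).
function project(p: Vec3, focalLength: number, width: number, height: number): [number, number] {
  const x = (p[0] * focalLength) / p[2];
  const y = (p[1] * focalLength) / p[2];
  // Screen origin is the top-left corner and y grows downwards.
  return [width / 2 + x, height / 2 - y];
}
```

Each vertex of a triangle goes through `rotateY`, `translate` and `project` in turn, and the three resulting 2D points are what get handed to the `<svg>` drawing step.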

Since we aren’t allowed to use attributes like fill or stroke, the <path> object fails us because it will default to a black fill (so we can’t properly see the object). So, to see outlines, we create multiple rectangles and rotate them around to make lines.
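A line built from a rotated rectangle can be sketched like this. The function name `lineAsRect` and the exact markup are assumptions; the idea is a thin `<rect>` whose width is the line's length, rotated around its starting point with SVG's `rotate(angle cx cy)` transform. No fill or stroke is needed, since the default black fill is exactly what we want here.

```typescript
// Returns the markup for a thin rectangle that looks like a line
// from (x0, y0) to (x1, y1).
function lineAsRect(x0: number, y0: number, x1: number, y1: number, thickness = 1): string {
  const length = Math.hypot(x1 - x0, y1 - y0);
  // SVG angles are in degrees; with y pointing down, atan2(dy, dx) matches
  // the direction SVG rotates in.
  const angle = (Math.atan2(y1 - y0, x1 - x0) * 180) / Math.PI;
  // The rectangle starts at the first point, centered vertically on the line,
  // and is then rotated around that point.
  return (
    `<rect x="${x0}" y="${y0 - thickness / 2}" width="${length}" height="${thickness}" ` +
    `transform="rotate(${angle} ${x0} ${y0})"/>`
  );
}
```

A triangle outline is then three of these rectangles, one per edge.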

Attached, our 3D engine! Notice the speed difference between the two methods.

Commits

Commit f33ffc2: Matrix operators and transformations

Commit 33a65b3: Quaternions

Commit cf7d636: Vector transformations

Commit 401d716: Primitive assembler & Rasterizer

Commit 34c8b94: Finished 3D rendering

Issues

Issue #1: Add 3D Rendering with <svg> and its subissues:

- Issue #2: Processing and transforming verticse

- Issue #3: Connecting vertices

- Issue #4: Drawing vertices

- Issue #5: Matrix math

To start, let me explain what this project is about: we want to render images without the <img> and <canvas> tags, and without CSS. Because it’s hilarious to do so.

The immediate idea that came to mind is transforming a W x H image into a corresponding W x H <table> element, where each <td> is a 1x1 pixel (or larger, if we want to do make-believe responsiveness). A little research showed that this was technically possible, and that’s good enough.

Basically, we just have to set the cellpadding and cellspacing properties of the table element to 0, give each of its td elements whatever color we want, and boom: we’ve got an image!

Now, of course, this method will have terrible performance (the DOM wasn’t made for rendering thousands of elements, and all drawing is done on the CPU), so we have to do our best to make it usable. My solution, for the time being, is creating the table as a string, and then sending it to the DOM to be parsed and rendered only once. There are alternatives, but we’re scraping pennies: actually drawing this to the DOM is the main timesink.
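A sketch of the string-building approach follows. The function name `buildTable` is hypothetical, and coloring cells through the legacy `bgcolor`, `width` and `height` attributes is an assumption about how a CSS-free version could work; the devlog does not say which attributes the project actually uses. The input is assumed to be RGBA bytes in row-major order, as returned by typical image decoders.

```typescript
// Builds the whole table as one string so the DOM parses it a single time.
function buildTable(pixels: ArrayLike<number>, width: number, height: number): string {
  const rows: string[] = [];
  for (let y = 0; y < height; y++) {
    let cells = "";
    for (let x = 0; x < width; x++) {
      const i = (y * width + x) * 4; // 4 bytes per pixel: R, G, B, A (alpha ignored)
      const r = pixels[i];
      const g = pixels[i + 1];
      const b = pixels[i + 2];
      // Adding 1 << 24 keeps the leading zeros; slice(1) drops the extra digit.
      const hex = ((1 << 24) | (r << 16) | (g << 8) | b).toString(16).slice(1);
      cells += `<td width="1" height="1" bgcolor="#${hex}"></td>`;
    }
    rows.push(`<tr>${cells}</tr>`);
  }
  return `<table cellpadding="0" cellspacing="0" border="0">${rows.join("")}</table>`;
}
```

The resulting string would then be assigned to a container's `innerHTML` once per image, rather than creating the cells one DOM call at a time.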

On a side note, I forgot how much I disliked writing JavaScript. Starting out, I didn’t want to use Node or any other “build system”, since the project is simple enough, but using raw JavaScript is a drag (no types, it’s hard to link modules to the main file, lots of issues with WebGPU support in VS Code, etc). So we’re over-engineering this and using Node and TypeScript. I’m all for bad UI, but I’m a little sensitive about my developer experience.

Attached, an old friend, rendered with this method in a 300x300 table.

(Obs: About an hour of work time was lost because I was doing the project in the wrong git repository)

Commits

Commit 49a515b: Proof of concept done

Commit bd885d1: Proof of concept with image data
