This project was developed with significant assistance from AI tools, which generated a large portion of the frontend code, while I was responsible for the overall idea, architecture, integration, testing, and final decisions.

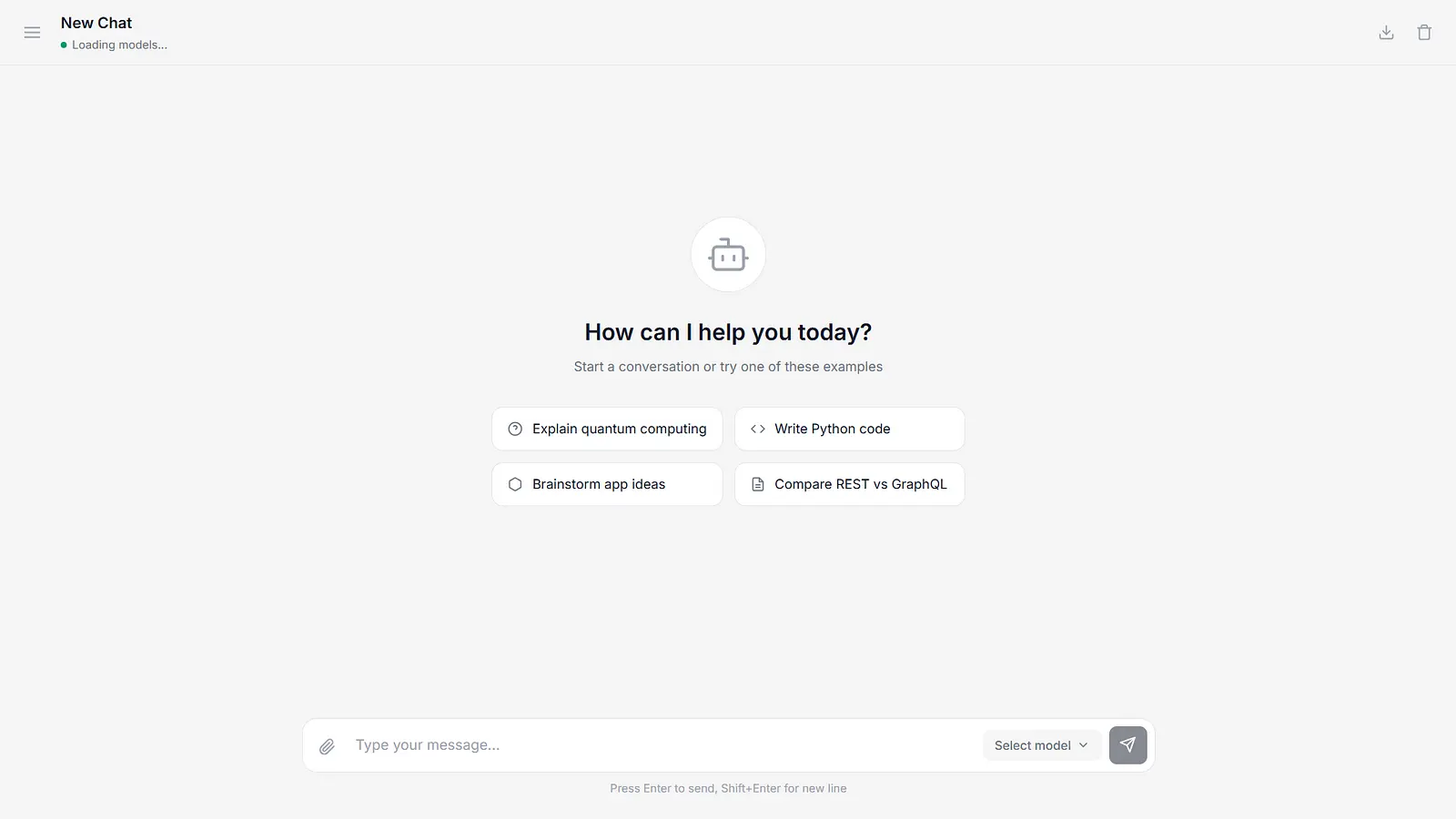

I added key features like persistent chat history, chat renaming and deletion, model selection, Markdown rendering, response regeneration, and media popups. The app now runs fully in the browser and connects to AI models through a proxy, so you can try it out without needing your own API key.

👉 Try the demo: https://mini-llm.pages.dev


Shipped this project!

I built Mini-LLM, a fully client-side web interface for interacting with LLMs.

It runs entirely in the browser: there is no app backend and no accounts, and everything is stored locally.

I deployed a live demo and focused on keeping the architecture minimal and transparent.

I’m building Mini-LLM, a lightweight web client for interacting with LLMs directly from the browser.

No backend and no accounts: all chat data is stored locally in the browser's LocalStorage.
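To illustrate how chat history, renaming, and deletion can work on top of LocalStorage alone, here is a minimal sketch. It is not Mini-LLM's actual code: the `Chat`/`Message` shapes, the `mini-llm:chats` key, and the helper names are hypothetical. The functions take any object with `getItem`/`setItem` (which the browser's `window.localStorage` provides), so the same logic can also run outside a browser with an in-memory store.

```typescript
// Hypothetical data shapes; the real app's structure may differ.
interface Message {
  role: "user" | "assistant";
  content: string;
}

interface Chat {
  id: string;
  title: string;
  messages: Message[];
}

// Minimal subset of the Web Storage API; window.localStorage satisfies it.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

// Hypothetical storage key holding all chats as one JSON array.
const CHATS_KEY = "mini-llm:chats";

// Read all chats; fall back to an empty list if nothing is stored or the JSON is corrupt.
function loadChats(store: KeyValueStore): Chat[] {
  const raw = store.getItem(CHATS_KEY);
  if (raw === null) return [];
  try {
    const parsed: unknown = JSON.parse(raw);
    return Array.isArray(parsed) ? (parsed as Chat[]) : [];
  } catch {
    return [];
  }
}

// LocalStorage only stores strings, so the whole list is serialized to JSON.
function saveChats(store: KeyValueStore, chats: Chat[]): void {
  store.setItem(CHATS_KEY, JSON.stringify(chats));
}

// Rename one chat by id, leaving the others unchanged.
function renameChat(store: KeyValueStore, id: string, title: string): void {
  const chats = loadChats(store).map((c) => (c.id === id ? { ...c, title } : c));
  saveChats(store, chats);
}

// Delete one chat by id.
function deleteChat(store: KeyValueStore, id: string): void {
  saveChats(store, loadChats(store).filter((c) => c.id !== id));
}

// In-memory stand-in for localStorage, useful outside a browser (e.g. in Node).
class MemoryStore implements KeyValueStore {
  private data = new Map<string, string>();
  getItem(key: string): string | null {
    return this.data.get(key) ?? null;
  }
  setItem(key: string, value: string): void {
    this.data.set(key, value);
  }
}

// Usage: in the browser, pass window.localStorage instead of a MemoryStore.
const store = new MemoryStore();
saveChats(store, [{ id: "1", title: "New chat", messages: [{ role: "user", content: "Hi" }] }]);
renameChat(store, "1", "Greetings");
console.log(loadChats(store)[0].title); // prints Greetings
```

Storing everything under a single key keeps the sketch simple; a larger history might instead use one key per chat so that saving a message does not rewrite every conversation.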

Live demo: https://mini-llm.pages.dev