Shipped this project!

I built OmniLab—an interactive, Iron Man-inspired 3D Heads-Up Display (HUD) controlled by hand gestures and AI vision.

The hardest part wasn’t the initial code, but the deployment battle over the last two weeks. My original idea was to run everything 100% locally for zero latency and just submit a video demo, but that wasn’t accepted. I tried migrating the backend to Render and Railway, but the reviewers (shipwrights) rejected them because those platforms don’t guarantee “forever free” stable hosting, even though my credits covered well beyond the Flavortown deadline. On top of that, I fought a brutal war against Cloudflare and Google CAPTCHAs trying to make my Playwright stealth agent work on datacenter IPs. The workarounds worked sometimes, but they were ultimately too unstable.

So, I pivoted. Instead of fighting endless hosting and CAPTCHA rules, I rebuilt the project base. I implemented MediaPipe Tasks for Web to handle vision directly in the browser and focused on massively improving the interface. I added new tactical gesture controls (Swipe, Thumbs Up, Fist), optimized the 3D HUD, and created a flawless “Demo Mode”. I’m extremely proud of how I adapted to the constraints and delivered a highly optimized, futuristic command center!

with how this version turned out! :)

and shipped the terminal workflow.

) (TabNews, Devpost, GitHub) Developed a little part during bus commutes

, it solves fragmented info retrieval. I overcame Discord’s 3s limit using async deferring and hardened persistence. Solo project by EngThi. Ready for production with Docker
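A minimal sketch of the deferring idea, assuming the 3s limit refers to Discord's requirement that an interaction gets an initial response within about three seconds. `FakeInteraction`, `slow_lookup`, and `handle_command` are hypothetical names for illustration, not the bot's real code. If the bot were built on discord.py (an assumption), the matching calls would be `interaction.response.defer()` and `interaction.followup.send(...)`.

```python
import asyncio

ACK_DEADLINE_S = 3.0  # assumed deadline for the initial acknowledgement


class FakeInteraction:
    """Hypothetical stand-in for a Discord interaction; not a real library class."""

    def __init__(self) -> None:
        self.events: list[str] = []

    async def defer(self) -> None:
        # Acknowledge right away so the deadline is met.
        self.events.append("deferred")

    async def followup(self, content: str) -> None:
        # Send the real answer once the slow work is done.
        self.events.append(f"followup: {content}")


async def slow_lookup(query: str) -> str:
    # Placeholder for slow retrieval work (scaled down so the sketch runs fast).
    await asyncio.sleep(0.05)
    return f"results for {query!r}"


async def handle_command(interaction: FakeInteraction, query: str) -> None:
    # 1) Acknowledge first, bounded by the deadline.
    await asyncio.wait_for(interaction.defer(), timeout=ACK_DEADLINE_S)
    # 2) Do the slow work afterwards and reply with a follow-up message.
    result = await slow_lookup(query)
    await interaction.followup(result)


def run_demo() -> list[str]:
    interaction = FakeInteraction()
    asyncio.run(handle_command(interaction, "tabnews"))
    return interaction.events
```

The point of the ordering is that the acknowledgement never waits on the slow work, so the time the lookup takes no longer matters for the deadline.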

It’s coming to an end

)us to create a true edge engineering directly from an A05s.

. The goal is to make sure the DEMO doesn’t break if a user doesn’t have a JWT token. I’m building a fallback mechanism so the

. I discovered that the system wasn’t updating the .env file and had cached the old URL, or something like that, causing this problem… but that was all it was.

Nest which already helps a lot, but depending on how many projects and things you need to host, it might not be enough… Thank God I recently created my AWS account and managed to create VPSs to deploy my projects. I still have some free trial dollars. I think it’s enough for 20 days or more. I didn’t mention it, but their dashboard and resource sections are very intuitive and easy to use. I liked it a lot!

doesn’t lose track of the artifacts when the background worker picks up the heavy renders. Hardening the persistence layer so can poll the status properly.

the reality is I was drafting the

how to pass the NotebookLM facts into the Gemini script generator.

Just making this post to calm down now. The war against captchas and cloud restrictions almost burned me out, but the system is finally stabilizing. The architecture is hardened

The Vision: Almost Done

can extract the exact facts we need without timing out the server. IT’S IN THE IMAGE IS REAL, AND YOU CAN PRACTICE THESE THINGS TO SEE GOOD RESULTS. At least for me it will… and helped me a lot)

Debug Session: The Permission Bottleneck

The Struggle: Hotkey V vs Manifest V3

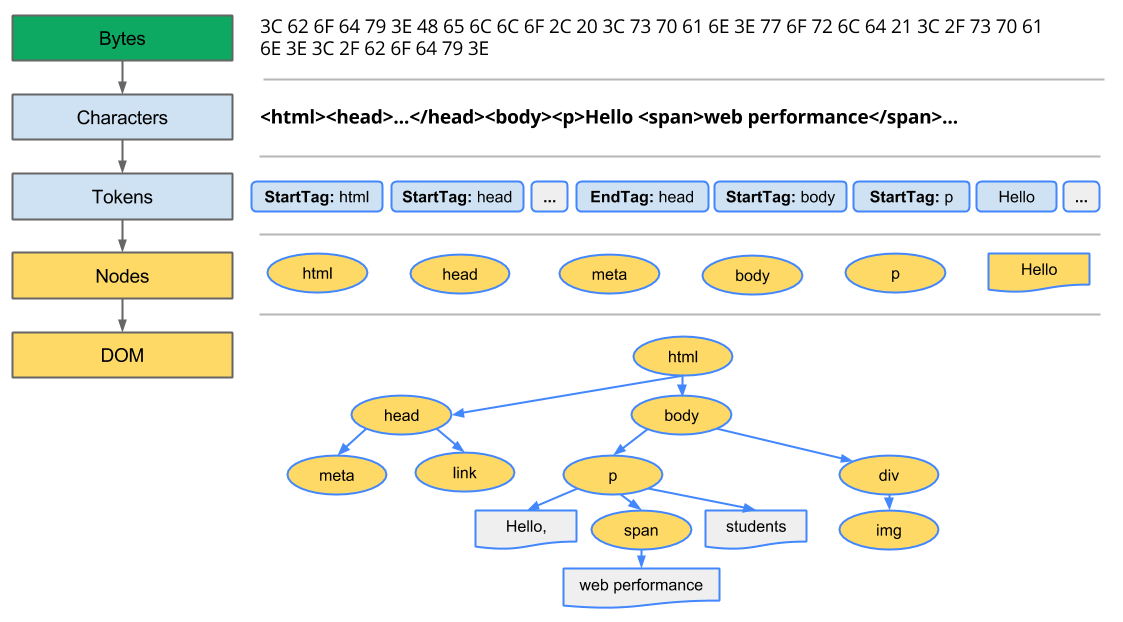

with the DOM tree crashing before the event listener finishes. I’m tearing apart the background and content scripts to figure out a safe fallback to make this resilient.

right now. I’m hitting the endpoints manually to ensure the payloads match the expected JSON structure before the orchestrator fully takes over.
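For illustration, a check like this can be scripted rather than eyeballed. This is a sketch only: the field names in `EXPECTED_FIELDS` are hypothetical, since the post does not show the real payload shape, and `find_payload_problems` is an invented helper name.

```python
import json

# Hypothetical expected shape: field name -> required Python type.
EXPECTED_FIELDS = {"job_id": str, "status": str, "artifacts": list}


def find_payload_problems(raw: str) -> list[str]:
    """Return human-readable problems; an empty list means the payload matches."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        return [f"invalid JSON: {exc.msg}"]
    if not isinstance(payload, dict):
        return ["top-level value must be an object"]
    problems = []
    for name, expected_type in EXPECTED_FIELDS.items():
        if name not in payload:
            problems.append(f"missing field: {name}")
        elif not isinstance(payload[name], expected_type):
            problems.append(
                f"wrong type for {name}: expected {expected_type.__name__}"
            )
    return problems
```

Pasting a response body from a manual request into `find_payload_problems` gives a quick list of mismatches before anything is wired into the orchestrator.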

… Good thing I’m studying Computer Engineering. The visual pipeline is throwing syntax errors, so I’m prompting the CLI to find the exact bottlenecks before we scale.

Hotkey V Finally Hardened

and planning our final moves. I was mapping out exactly what needs to be refactored in the

I’m really proud of how the

explaining exactly what I missed (at least that’s what I thought)

and set it as a terminal-style background, took a screenshot of the AstroLab menu and put it there. I edited the Mac terminal app (I think any macOS product has it) that’s in the background. I wrote the text and tried to blur the CLI image, and that was it.

, have a

while testing so much!

I found this sticker of Clash King and wanted to use this

lab logic. By hardening this bridge now, we ensure the frontend won’t break when we fully plug in the heavy video generation later.

. It prints a nice rich table showing the Python version, OS, and whether the API keys and local storage (~/.astrolab/) are correctly detected.
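A rough sketch of what such an environment check might look like, using only the standard library and plain-text output instead of a styled table so it runs anywhere. `GEMINI_API_KEY` is a hypothetical variable name (the post does not list the real keys), and `collect_checks` and `format_table` are invented helper names; only the `~/.astrolab/` path comes from the post.

```python
import os
import platform
from pathlib import Path

API_KEY_VARS = ("GEMINI_API_KEY",)  # hypothetical variable name
STORAGE_DIR = Path.home() / ".astrolab"  # path mentioned in the post


def collect_checks(env=os.environ, storage_dir: Path = STORAGE_DIR) -> list[tuple[str, str]]:
    """Gather (label, value) rows describing the local environment."""
    rows = [
        ("Python", platform.python_version()),
        ("OS", platform.system()),
    ]
    for var in API_KEY_VARS:
        # Report presence only; never print the key itself.
        rows.append((var, "found" if env.get(var) else "missing"))
    rows.append((str(storage_dir), "found" if storage_dir.is_dir() else "missing"))
    return rows


def format_table(rows: list[tuple[str, str]]) -> str:
    """Render rows as two aligned plain-text columns."""
    width = max(len(name) for name, _ in rows)
    return "\n".join(f"{name.ljust(width)}  {value}" for name, value in rows)
```

Calling `print(format_table(collect_checks()))` prints one line per check. Passing `env` and `storage_dir` explicitly keeps the check easy to test without touching the real home directory.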

, Notes

) a deep, opaque background to ensure legibility against any wallpaper.

and the user is the day of the week.

and took up too much space. In v1.5.2, I’ve completely reimagined the interaction model.

bars pulsing to your voice. No more wondering if the mic is picking you up—the extension now “breathes” with you.

connection (phone, mouse, etc.) before the browser

so the dashboard stays alive during field testing.

on Windows where

couldn’t find the

) active, every pixel of space matters.
