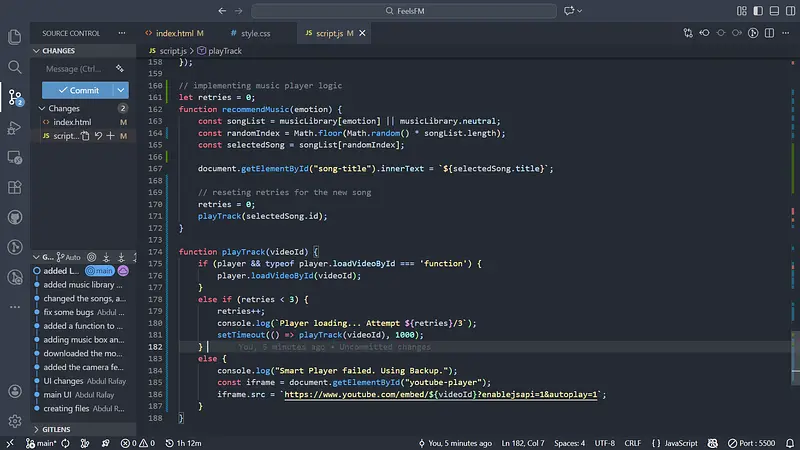

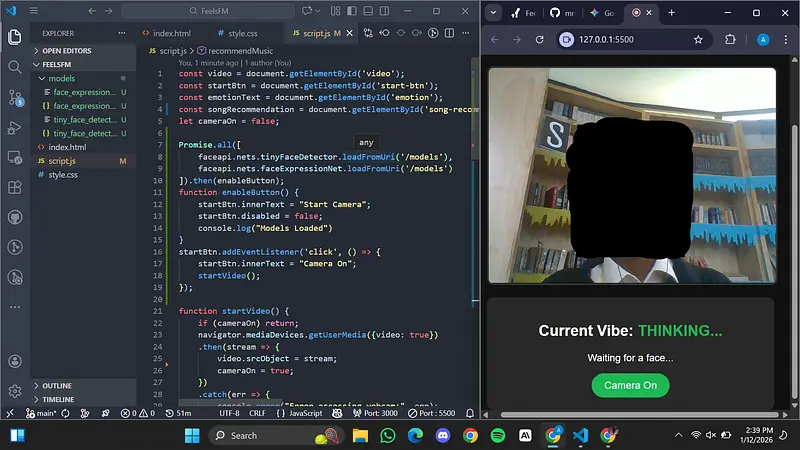

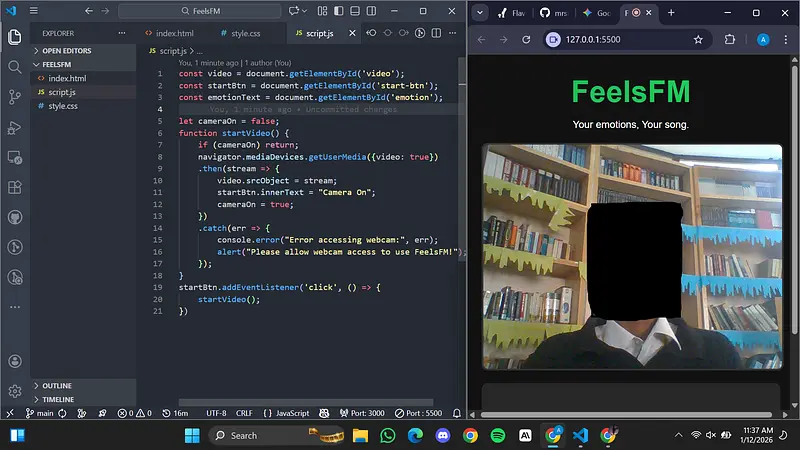

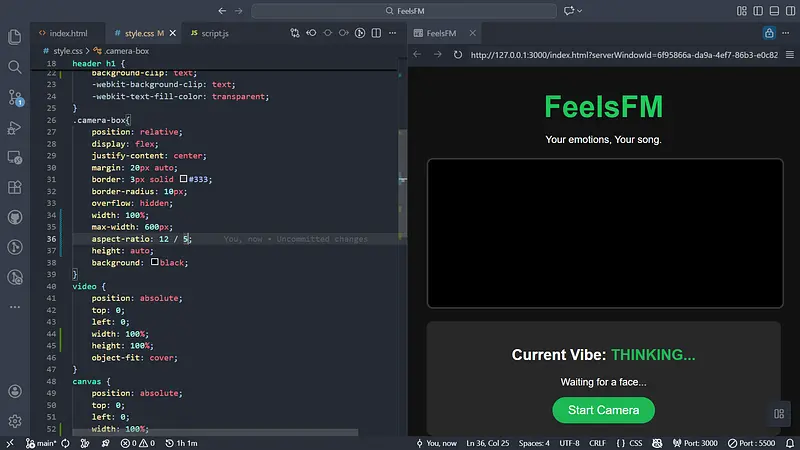

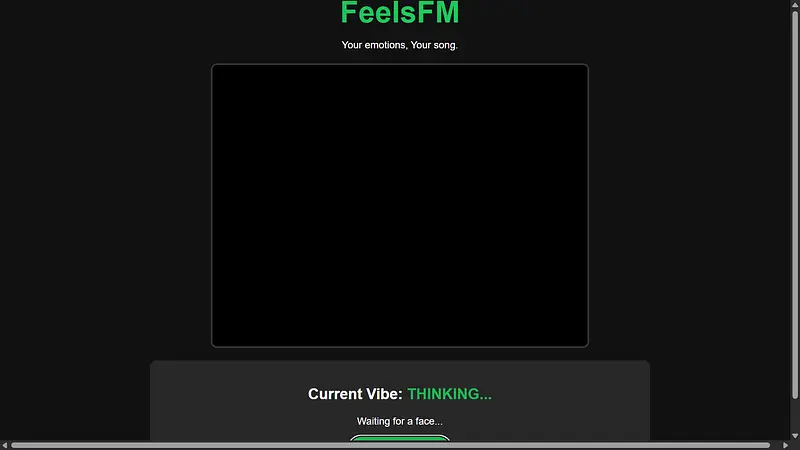

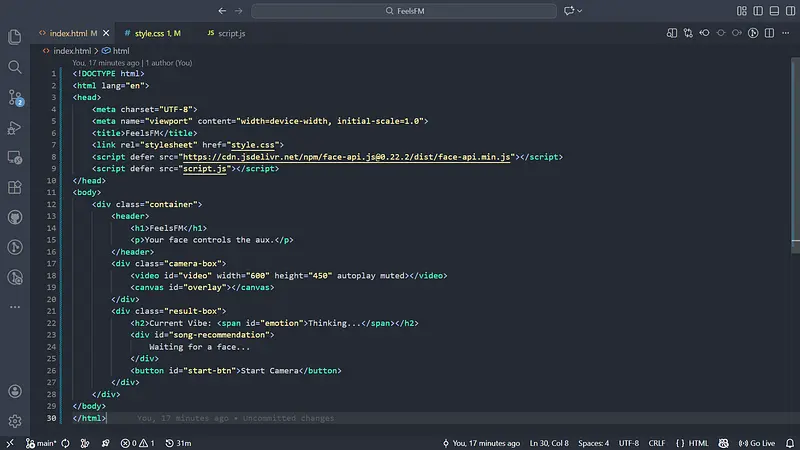

Up until today, FeelsFM had the memory of a goldfish: refresh the page and your music history was gone. Today, I fixed that.

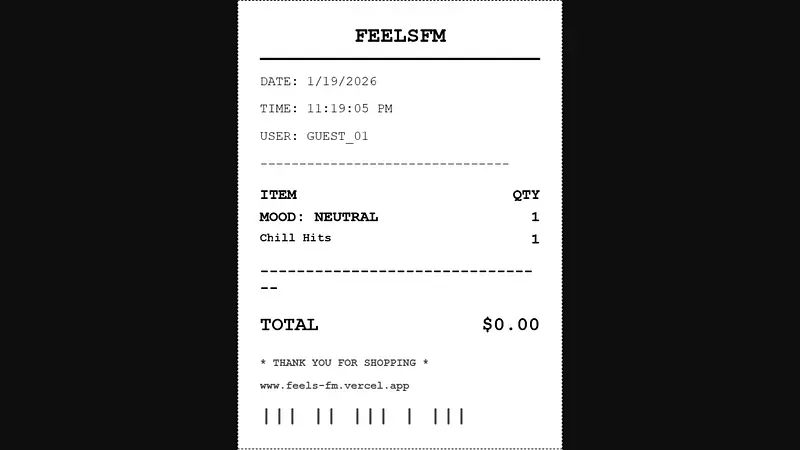

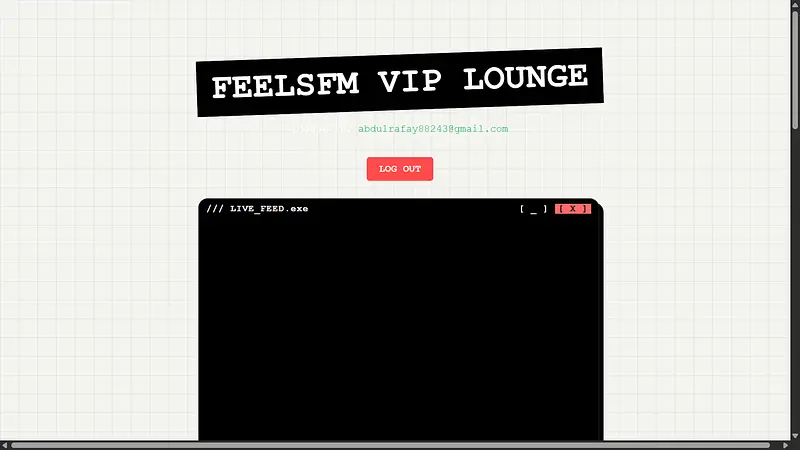

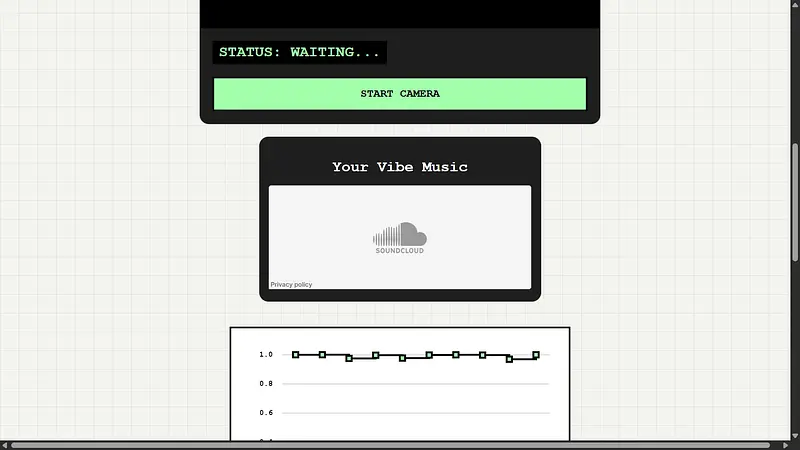

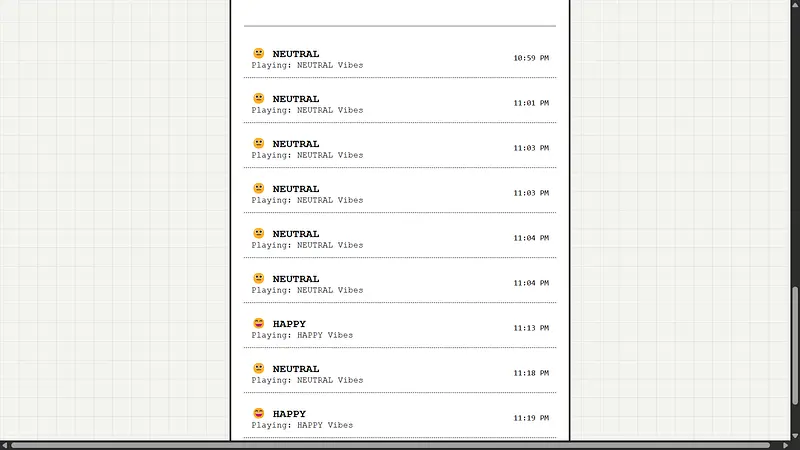

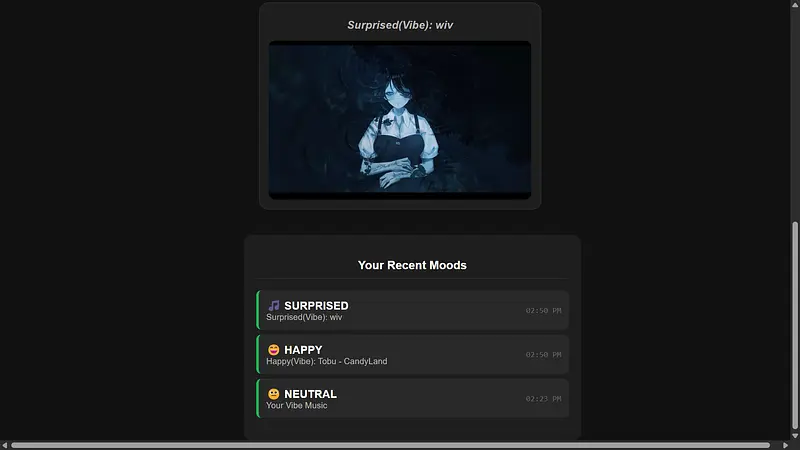

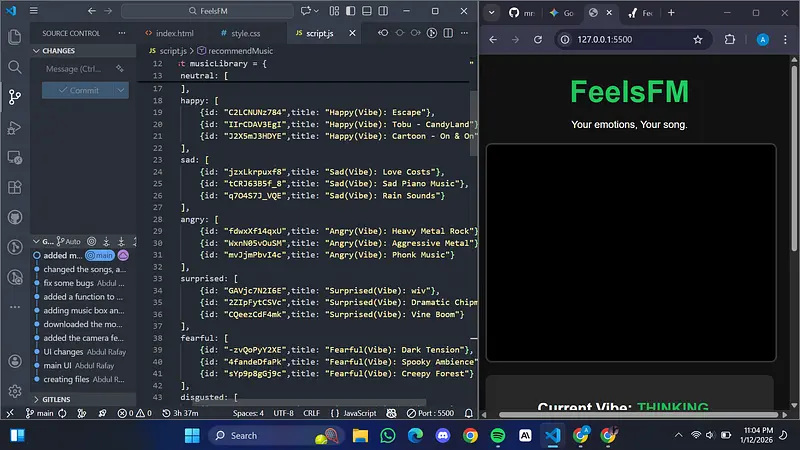

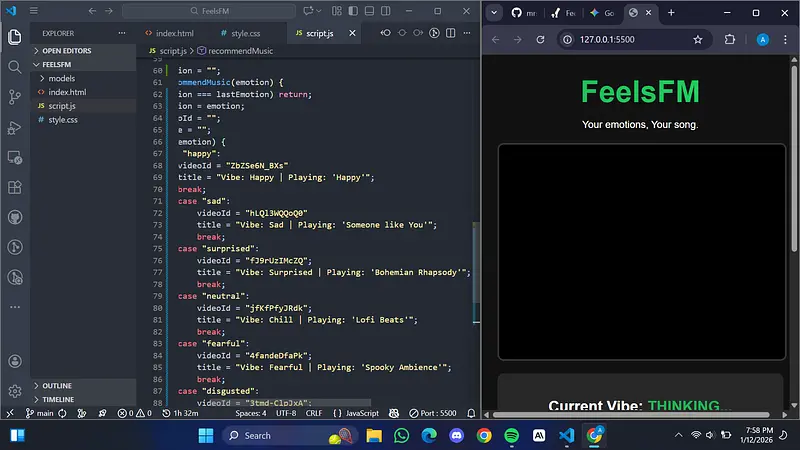

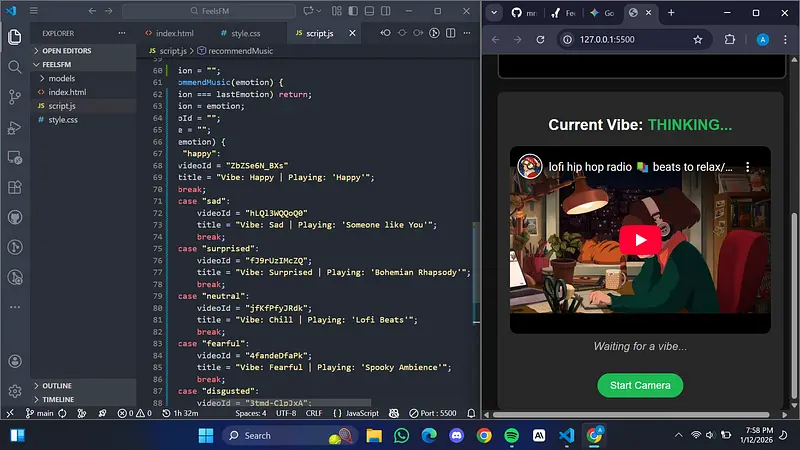

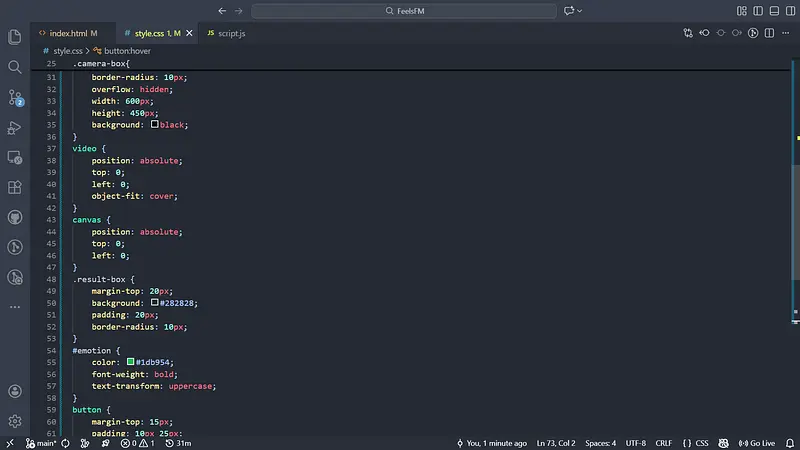

I spent the day integrating Supabase, giving the app a permanent database. Now, every time you scan your face, the app saves your emotion, the song it recommended, and the intensity of the feeling.
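The post doesn't show the database schema, so here is a minimal sketch of what one saved scan might look like before it goes to Supabase. The table name `mood_history`, its column names, and the assumption that intensity is a 0-to-1 confidence score are all hypothetical. The function only validates and shapes the row; the actual write is shown as a comment using the supabase-js v2 `from(...).insert(...)` call.

```typescript
// Hypothetical row shape for a `mood_history` table; the real schema
// isn't shown in the post, so these column names are assumptions.
interface MoodRow {
  emotion: string;    // detected emotion label, e.g. "Neutral"
  song: string;       // the track recommended for that emotion
  intensity: number;  // detector confidence, assumed to be in [0, 1]
  created_at: string; // ISO-8601 timestamp of the scan
}

// Validates one face-scan result and turns it into a row ready to insert.
function toMoodRow(
  emotion: string,
  song: string,
  intensity: number,
  now: Date = new Date(),
): MoodRow {
  if (!emotion.trim()) {
    throw new Error("emotion label is required");
  }
  if (!Number.isFinite(intensity) || intensity < 0 || intensity > 1) {
    throw new RangeError(`intensity must be in [0, 1], got ${intensity}`);
  }
  return { emotion, song, intensity, created_at: now.toISOString() };
}

// With supabase-js v2 the row could then be saved with something like:
//   const { error } = await supabase.from("mood_history").insert(toMoodRow(e, s, i));
// (client setup and the table itself are assumed, not taken from the post).
```

Stamping `created_at` on the client keeps the function easy to test; a `default now()` on the column would work just as well and avoids trusting the browser clock.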

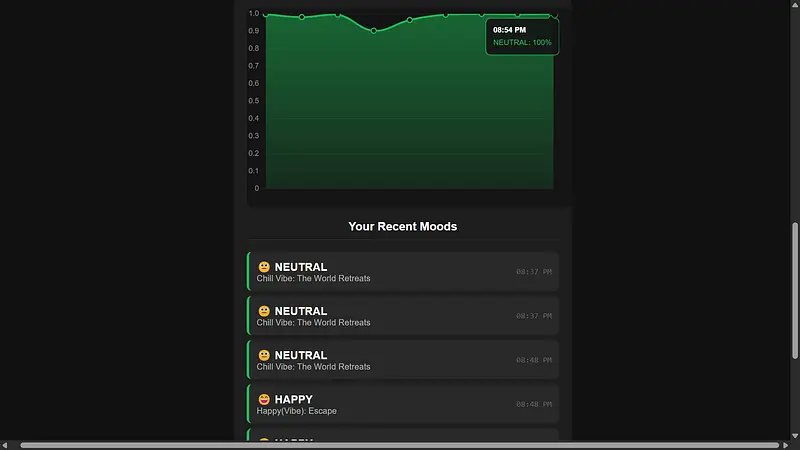

To make this data actually useful, I built a Vibe Trend Dashboard. It uses a line graph to show how your mood intensity fluctuates over time. I spent a lot of time polishing the UI: getting the tooltips to glow green and show the exact mood percentage (e.g., “Neutral: 99%”) took some tricky JavaScript configuration, but it looks awesome now.
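The post doesn't name the charting library behind the dashboard. Assuming Chart.js v3 or later, a tooltip like “Neutral: 99%” can come from the `plugins.tooltip.callbacks.label` option. The sketch below builds such a config; `MoodPoint`, its field names, the 0-to-1 intensity scale, and the colors are hypothetical choices for illustration, not the app's actual code.

```typescript
// Hypothetical shape of one saved reading; field names are assumptions.
interface MoodPoint {
  createdAt: string; // ISO timestamp of the scan
  emotion: string;   // e.g. "Neutral"
  intensity: number; // detector confidence, assumed to be in [0, 1]
}

// Formats one reading as tooltip text, e.g. "Neutral: 99%".
function formatTooltipLabel(point: MoodPoint): string {
  return `${point.emotion}: ${Math.round(point.intensity * 100)}%`;
}

// Builds a Chart.js (v3+) line-chart config for the trend dashboard.
// The y-axis plots intensity as a percentage; the tooltip looks the
// original reading back up by index so it can show the emotion name.
function buildTrendConfig(points: MoodPoint[]) {
  return {
    type: "line" as const,
    data: {
      labels: points.map((p) => new Date(p.createdAt).toLocaleString()),
      datasets: [
        {
          label: "Mood intensity (%)",
          data: points.map((p) => Math.round(p.intensity * 100)),
          borderColor: "#22c55e", // green line
          tension: 0.3,           // slightly smoothed curve
        },
      ],
    },
    options: {
      scales: { y: { min: 0, max: 100 } },
      plugins: {
        tooltip: {
          backgroundColor: "rgba(0, 0, 0, 0.85)",
          // Green outline and text; a real glow effect would need extra
          // work, e.g. an external HTML tooltip styled with CSS.
          borderColor: "#22c55e",
          borderWidth: 1,
          bodyColor: "#22c55e",
          callbacks: {
            // Chart.js passes a tooltip item whose dataIndex points into `points`.
            label: (ctx: { dataIndex: number }) =>
              formatTooltipLabel(points[ctx.dataIndex]),
          },
        },
      },
    },
  };
}

// In the browser: new Chart(canvasElement, buildTrendConfig(points));
```

Plotting rounded percentages keeps the y-axis readable, while the callback still has access to the full reading, so the tooltip can show the emotion name alongside the number.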

It’s amazing to see the data populate in real time. Can’t wait to ship the live link!