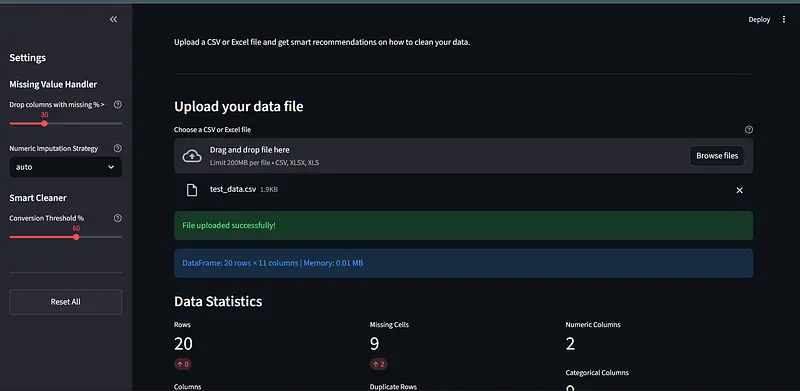

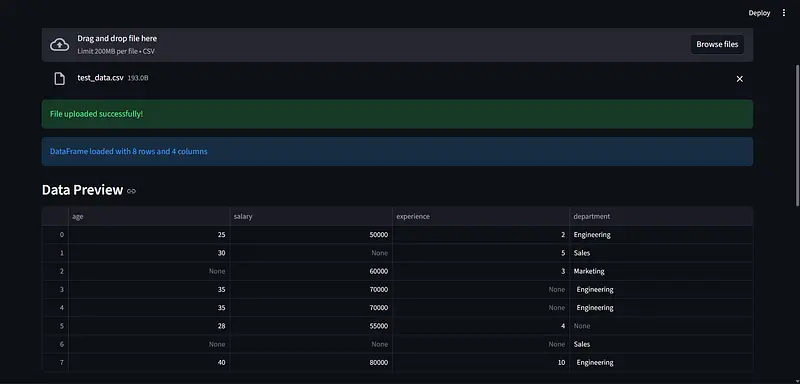

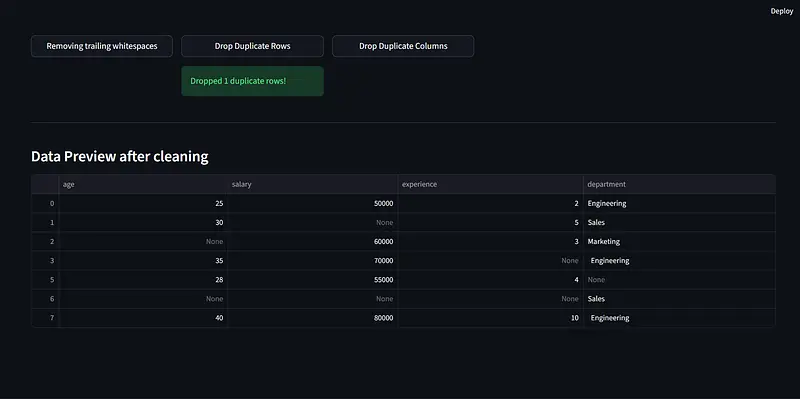

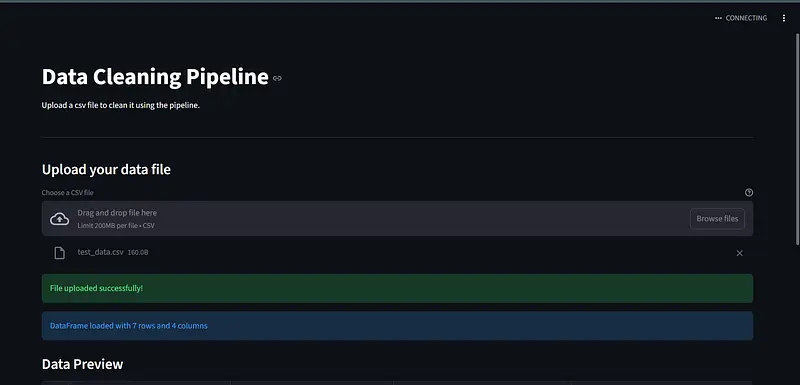

A data cleaning pipeline: you upload a CSV or Excel file, and the app scans it, tells you what is wrong, and lets you fix it. You can let the AI handle everything automatically, or go through each issue yourself and decide exactly which columns to touch!
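To give a rough idea of the scan-then-fix flow, here is a minimal sketch using only the Python standard library. It is not the app's actual code: the `scan` and `clean` functions, the issue types they check (missing values, stray whitespace, duplicate rows), and the sample data are all assumptions made up for illustration.

```python
import csv
import io


def scan(rows):
    """Count missing values and untrimmed whitespace per column, plus exact duplicate rows."""
    issues = {"missing": {}, "whitespace": {}, "duplicate_rows": 0}
    seen = set()
    for row in rows:
        key = tuple(row.items())
        if key in seen:
            issues["duplicate_rows"] += 1
        seen.add(key)
        for col, value in row.items():
            if value is None or value.strip() == "":
                issues["missing"][col] = issues["missing"].get(col, 0) + 1
            elif value != value.strip():
                issues["whitespace"][col] = issues["whitespace"].get(col, 0) + 1
    return issues


def clean(rows, columns=None):
    """Trim whitespace in the chosen columns (all columns if None), then drop exact duplicates."""
    cleaned, seen = [], set()
    for row in rows:
        fixed = {
            col: value.strip()
            if value is not None and (columns is None or col in columns)
            else value
            for col, value in row.items()
        }
        key = tuple(fixed.items())
        if key not in seen:
            seen.add(key)
            cleaned.append(fixed)
    return cleaned


# Hypothetical sample file; assumes every row has no more fields than the header.
SAMPLE = "name,city\nAna, Lisbon\nAna, Lisbon\nBo,\n"
rows = list(csv.DictReader(io.StringIO(SAMPLE)))

report = scan(rows)                          # what is wrong
fixed_rows = clean(rows, columns=["city"])   # fix only the columns you picked
```

Passing `columns=None` to `clean` stands in for the "fix everything" mode, while passing a list of column names mirrors going through the issues yourself and choosing what to touch.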

Built it for data analysts, researchers, students (ME), and anyone who’s ever wasted hours cleaning a spreadsheet before they could even start their actual work.

Used AI to debug Streamlit UI issues!

Used AI to write the prompts sent to Gemini for the AI assistant, AI overview, and AI guide.

Used AI for the test files in the testing folder.