An efficient C++ NNUE UCI 0x88 chess engine that currently sits at 2014 ELO

Hackatime had an issue with time tracking and this time was “resynced”; a shipwright asked me to write a devlog so that it can “sync” later.

In the meantime here is a chess game my engine played :3


Shipped this project!

In this re-ship I implemented an NNUE and trained it on ~30 million games. That sounds like a lot but isn’t, so the engine is still fairly random and sometimes blunders in positions it wasn’t trained on. It’s still good, though, and the blunders are mostly ones that only show up at depth 4+ rather than free queens.

NNUE Works!

After many hours spent building and training a neural network (an NNUE, to be specific), writing a basic trainer, and implementing accumulators and other NNUE features, I can finally say that my engine is now NNUE-based!

The NNUE was trained on 30 million positions, which is very light compared to other toy engines, whose networks are usually trained on 5+ billion positions and are much stronger as a result. Sadly, I don’t have the compute to train at that scale. I trained the network on my own engine’s self-play at depths 6-10, which was insanely slow, but I can’t complain now that I have a working, and actually pretty good, NNUE!

The next step is implementing output buckets and using multiple layers instead of just one hidden layer (which was kind of a bottleneck), and ultimately training the NNUE on 1+ billion games, which should make 2500 ELO reachable!
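Output buckets usually mean keeping several sets of output weights and picking one per position. A minimal sketch, assuming 8 buckets chosen by total piece count (a common scheme in hobby engines, not necessarily what this engine will use); `HIDDEN`, `outputBucket`, `OutputLayers` and `evaluate` are hypothetical names, and the hidden layer is assumed to be already activated:

```cpp
#include <array>
#include <cassert>
#include <cstdint>

constexpr int HIDDEN = 4;       // hypothetical (tiny) hidden-layer width
constexpr int NUM_BUCKETS = 8;  // assumption: 8 output buckets

// Map the number of pieces on the board (2..32, kings included) to a bucket 0..7:
// 2-5 -> 0, 6-9 -> 1, ..., 26-29 -> 6, 30-32 -> 7.
int outputBucket(int pieceCount) {
    assert(pieceCount >= 2 && pieceCount <= 32);
    return (pieceCount - 2) / 4;
}

// One set of output weights and one bias per bucket.
struct OutputLayers {
    std::array<std::array<int32_t, HIDDEN>, NUM_BUCKETS> weights{};
    std::array<int32_t, NUM_BUCKETS> bias{};
};

// Dot the activated hidden layer with the weights of the selected bucket only.
int32_t evaluate(const OutputLayers& out, const std::array<int32_t, HIDDEN>& hidden, int pieceCount) {
    const int b = outputBucket(pieceCount);
    int32_t sum = out.bias[b];
    for (int i = 0; i < HIDDEN; ++i) sum += out.weights[b][i] * hidden[i];
    return sum;
}
```

Each bucket only ever sees positions with a similar amount of material, so openings and endgames get their own output weights while sharing the expensive hidden layer.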

Below is a quiet game the engine won against a friend who’s quite good at chess (shoutout @BlueCheckmate)! I expected the engine to be more aggressive (or random?) after adding the not-so-well-trained NNUE, but it did quite well against him!

Shipped this project!

I built a fairly efficient chess engine. There is still a lot of work to be done to increase its ELO and make it faster; for now it sits at 2014 ELO (determined using SPRT). I ran into a lot of bugs while making this, and implementing chess was not fun at all, but training the neural network was kind of satisfying!
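For readers new to SPRT: it keeps playing games until the results clearly favor one of two Elo hypotheses (for example +0 vs +5) and then stops. A minimal sketch of the decision statistic, assuming a normal approximation of the per-game score (a simplification; dedicated testing tools may use more refined models); all function names are hypothetical:

```cpp
#include <cassert>
#include <cmath>

// Expected score for an Elo difference under the logistic model.
double eloToScore(double elo) { return 1.0 / (1.0 + std::pow(10.0, -elo / 400.0)); }

// Approximate log-likelihood ratio of H1 (elo1) vs H0 (elo0) from win/draw/loss counts,
// treating the mean game score as normally distributed.
double sprtLLR(int wins, int draws, int losses, double elo0, double elo1) {
    const double n = wins + draws + losses;
    if (n == 0) return 0.0;
    const double mean = (wins + 0.5 * draws) / n;
    const double var = (wins * std::pow(1.0 - mean, 2) + draws * std::pow(0.5 - mean, 2) +
                        losses * std::pow(0.0 - mean, 2)) / n;
    if (var <= 0.0) return 0.0;  // all results identical: no information yet
    const double s0 = eloToScore(elo0), s1 = eloToScore(elo1);
    return n * (s1 - s0) * (2.0 * mean - s0 - s1) / (2.0 * var);
}

// Wald's stopping bounds for false-positive rate alpha and false-negative rate beta.
double lowerBound(double alpha, double beta) { return std::log(beta / (1.0 - alpha)); }
double upperBound(double alpha, double beta) { return std::log((1.0 - beta) / alpha); }
```

Accept H1 once the LLR rises above `upperBound`, accept H0 once it falls below `lowerBound`, and keep playing otherwise. With alpha = beta = 0.05 the bounds are about ±2.94.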

Training the neural network + other cool optimizations!

I let a neural network train for ~7 hours, although I now realize there were some things I should have done first before letting it train :3

I also fixed some bugs and added search optimizations like the Killer Heuristic and the History Heuristic, which cut middlegame search time by 20-30%!
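A minimal sketch of both heuristics, assuming a hypothetical 16-bit move encoding (0x88 from and to squares) and two killer slots per ply; the `depth * depth` history bonus is one common choice, not necessarily the one used in this engine:

```cpp
#include <array>
#include <cassert>
#include <cstdint>

// Hypothetical compact move: 0x88 from-square in the high byte, to-square in the low byte.
using Move = uint16_t;
inline Move makeMove(int from, int to) { return static_cast<Move>((from << 8) | to); }

constexpr int MAX_PLY = 64;

struct MoveOrderingTables {
    // Killers: quiet moves that recently caused a beta cutoff at the same ply.
    std::array<std::array<Move, 2>, MAX_PLY> killers{};
    // History: cutoff credit per [from][to] pair on the 128-square 0x88 board.
    std::array<std::array<int, 128>, 128> history{};

    // Call when a quiet (non-capture) move causes a beta cutoff.
    void onQuietCutoff(Move m, int ply, int depth) {
        if (killers[ply][0] != m) {  // keep the two killer slots distinct
            killers[ply][1] = killers[ply][0];
            killers[ply][0] = m;
        }
        history[m >> 8][m & 0xFF] += depth * depth;  // deeper cutoffs earn more credit
    }

    // Ordering score for quiet moves: killers first, then by history.
    int quietScore(Move m, int ply) const {
        if (m == killers[ply][0]) return 1'000'000;
        if (m == killers[ply][1]) return 900'000;
        return history[m >> 8][m & 0xFF];
    }
};
```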

There are also some other small optimizations, like replacing the O(n log n) std::sort with a pseudo-bucket sort (ordering moves so that likely beta-cutoff moves are searched first!)

Now that I have a trained network, the only thing left is plugging it into the engine!!! We’re staying up tonight reading theory fr

Below is a quick game won against a friend who is rated 2200 FIDE :3


Road to NNUE!

I successfully implemented MLPs (short for Multi-Layer Perceptrons)! It took a lot of math bashing and going back to my calculus notes. All that’s left to make a working NNUE evaluation function is to train the MLP on some 2500+ Lichess games and optimize the architecture so that it’s fast enough for a chess engine!
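A minimal sketch of an MLP learning sin(x) with hand-written backpropagation, assuming one hidden layer of tanh units, a linear output, mean squared error and plain per-sample SGD; the sizes, learning rate and names (`TinyMLP`, `sinLoss`, `trainOnSin`) are illustrative, not the author’s setup:

```cpp
#include <cassert>
#include <cmath>
#include <random>
#include <vector>

const double PI = std::acos(-1.0);

// 1 -> H -> 1 network: tanh hidden units, linear output.
struct TinyMLP {
    int H;
    std::vector<double> w1, b1, w2;
    double b2 = 0.0;

    explicit TinyMLP(int hidden, unsigned seed = 42) : H(hidden), w1(hidden), b1(hidden), w2(hidden) {
        std::mt19937 rng(seed);
        std::uniform_real_distribution<double> u(-1.0, 1.0);
        for (int j = 0; j < H; ++j) { w1[j] = u(rng); b1[j] = u(rng); w2[j] = 0.25 * u(rng); }
    }

    // Forward pass; stores hidden activations in h for backprop.
    double forward(double x, std::vector<double>& h) const {
        double y = b2;
        for (int j = 0; j < H; ++j) {
            h[j] = std::tanh(w1[j] * x + b1[j]);
            y += w2[j] * h[j];
        }
        return y;
    }

    // One SGD step on the squared error (y - target)^2, chain rule done by hand.
    void step(double x, double target, double lr) {
        std::vector<double> h(H);
        const double dy = 2.0 * (forward(x, h) - target);        // dL/dy
        for (int j = 0; j < H; ++j) {
            const double dz = dy * w2[j] * (1.0 - h[j] * h[j]);  // dL/dz_j, since tanh' = 1 - tanh^2
            w2[j] -= lr * dy * h[j];
            w1[j] -= lr * dz * x;
            b1[j] -= lr * dz;
        }
        b2 -= lr * dy;
    }
};

// i-th of n evenly spaced points in [-pi, pi].
double sampleX(int i, int n) { return -PI + 2.0 * PI * i / (n - 1); }

// Mean squared error against sin(x) on n sample points.
double sinLoss(const TinyMLP& net, int n) {
    std::vector<double> h(net.H);
    double sum = 0.0;
    for (int i = 0; i < n; ++i) {
        const double x = sampleX(i, n);
        const double e = net.forward(x, h) - std::sin(x);
        sum += e * e;
    }
    return sum / n;
}

double trainOnSin(TinyMLP& net, int epochs, int n, double lr) {
    for (int e = 0; e < epochs; ++e)
        for (int i = 0; i < n; ++i) net.step(sampleX(i, n), std::sin(sampleX(i, n)), lr);
    return sinLoss(net, n);
}
```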

NNUEs are a modification of Multi-Layer Perceptrons that makes them very efficient and usable as an evaluation function for chess engines: the first layer is updated incrementally as pieces move instead of being recomputed from scratch. NNUEs are quite recent (introduced in 2018), and using one alongside 0x88 and MTD(f) is quite funny if you ask me! It’s basically a Ford Model A with a jet engine strapped to it.
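The “efficiently updatable” part can be sketched like this: the first layer’s output (the accumulator) is just the bias plus one weight column per active feature, so a quiet move subtracts one column and adds another instead of recomputing everything. Assumptions: a single perspective, 768 piece-square features, and a tiny `HIDDEN` width; `featureIndex`, `refresh` and `applyQuietMove` are hypothetical names:

```cpp
#include <array>
#include <cassert>
#include <cstdint>
#include <vector>

constexpr int INPUTS = 768;  // assumption: 2 colors x 6 piece types x 64 squares
constexpr int HIDDEN = 8;    // tiny for illustration; real networks use far more neurons

using Column = std::array<int16_t, HIDDEN>;
using Accumulator = std::array<int16_t, HIDDEN>;

struct FeatureTransformer {
    std::vector<Column> weights = std::vector<Column>(INPUTS);  // one column per input feature
    Column bias{};
};

// Feature index for a piece of `color` (0/1) and `piece` type (0..5) on `square` (0..63).
// A 0x88 square converts with (sq >> 4) * 8 + (sq & 7).
int featureIndex(int color, int piece, int square) { return (color * 6 + piece) * 64 + square; }

// Full refresh: bias plus the weight column of every active feature.
Accumulator refresh(const FeatureTransformer& ft, const std::vector<int>& active) {
    Accumulator acc = ft.bias;
    for (int f : active)
        for (int i = 0; i < HIDDEN; ++i) acc[i] = static_cast<int16_t>(acc[i] + ft.weights[f][i]);
    return acc;
}

// Incremental update for a quiet move: one feature turns off, one turns on.
// Costs 2 x HIDDEN additions no matter how many pieces are on the board.
void applyQuietMove(const FeatureTransformer& ft, Accumulator& acc, int removed, int added) {
    for (int i = 0; i < HIDDEN; ++i)
        acc[i] = static_cast<int16_t>(acc[i] + ft.weights[added][i] - ft.weights[removed][i]);
}
```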

Below is an image of the MLP learning the sine function, and also a game won against a friend of mine who’s rated 2100 on Lichess!


Even better! + Blog

Anass here with another 10-hour devlog! I did a lot of stuff this time; technical details are in the new blog. I wrote a FEN parser (FEN is a text notation for describing a board position), which was kind of a headache, to be fair. I also implemented basic time management with “Iterative Deepening”, a weird, slow-looking technique that runs repeated depth-limited DFS searches to get BFS-like, level-by-level behavior. Finally, I implemented a “Transposition Table”, which basically stores search results per board so that we don’t recalculate Minimax for the same position multiple times! This was necessary to make MTD(f) fast enough for practical use!
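A minimal sketch of parsing the first FEN field (piece placement) into a 0x88 board, assuming a 128-entry `char` array with '.' for empty squares; side to move, castling rights, the en passant square and the move counters are left out, and `parsePlacement` is a hypothetical name:

```cpp
#include <array>
#include <cassert>
#include <string>

// 0x88 board: index = rank * 16 + file, rank 0 is rank 1. Squares with (sq & 0x88) != 0 are off-board.
using Board = std::array<char, 128>;

// Parse only the piece-placement field. FEN lists rank 8 first, files a..h within each rank.
// Returns false on malformed input.
bool parsePlacement(const std::string& fen, Board& board) {
    board.fill('.');
    int rank = 7, file = 0;
    for (char c : fen) {
        if (c == ' ') break;  // end of the placement field
        if (c == '/') {
            if (file != 8 || rank == 0) return false;  // previous rank must be complete
            --rank;
            file = 0;
        } else if (c >= '1' && c <= '8') {
            file += c - '0';  // run of empty squares
            if (file > 8) return false;
        } else if (std::string("pnbrqkPNBRQK").find(c) != std::string::npos) {
            if (file > 7) return false;
            board[rank * 16 + file] = c;
            ++file;
        } else {
            return false;
        }
    }
    return rank == 0 && file == 8;
}
```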

What’s done till now:

✅ Move generation

✅ Basic evaluation function

✅ Minimax and Quiescence search

✅ UCI integration

✅ Time management + Iterative deepening

✅ A FEN parser

✅ Transposition table

✅ MTD(f) implementation

⏳ Implement and train an MLP on Lichess elite database

⏳ Implement NNUE
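How the time management, iterative deepening and MTD(f) items fit together can be sketched as an iterative-deepening loop driving MTD(f), where each depth’s score becomes the next depth’s first guess. This assumes a hypothetical `NullWindowSearch` callback standing in for a fail-soft alpha-beta backed by the transposition table, and only checks the clock between iterations, which is a simplification:

```cpp
#include <cassert>
#include <chrono>
#include <functional>

constexpr int INF = 1'000'000;

// Assumed signature: fail-soft alpha-beta over the window (alpha, beta) to `depth`.
using NullWindowSearch = std::function<int(int alpha, int beta, int depth)>;

// MTD(f): narrow [lower, upper] around the minimax value with zero-width searches.
int mtdf(int firstGuess, int depth, const NullWindowSearch& search) {
    int g = firstGuess, lower = -INF, upper = INF;
    while (lower < upper) {
        const int beta = (g == lower) ? g + 1 : g;
        g = search(beta - 1, beta, depth);
        if (g < beta) upper = g;  // failed low: g is an upper bound
        else lower = g;           // failed high: g is a lower bound
    }
    return g;
}

// Iterative deepening: search depth 1, 2, 3, ... and seed each MTD(f) run with the
// previous score, stopping once the time budget is used up.
int iterativeDeepening(int maxDepth, std::chrono::milliseconds budget, const NullWindowSearch& search) {
    const auto start = std::chrono::steady_clock::now();
    int guess = 0;
    for (int depth = 1; depth <= maxDepth; ++depth) {
        guess = mtdf(guess, depth, search);
        if (std::chrono::steady_clock::now() - start >= budget) break;
    }
    return guess;
}
```

A good first guess is what makes MTD(f) cheap: when the previous depth’s score is close, only a couple of zero-window searches are needed, and the transposition table keeps those repeated searches from redoing work.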

Below is an honestly weird win against Stockfish level 6, which is believed to be around 2000 ELO!


Getting better + blog

I did a lot of bug fixes! It turns out the reason the engine kept making stupid moves was not the Horizon Effect but that en passant and castling were both broken. Now it’s all fixed! :P
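A common way to keep castling rights correct on a 0x88 board is a per-square mask that is ANDed in for both the from-square and the to-square of every move, which also covers a rook being captured on its home square. A sketch under that assumption, with a hypothetical 4-bit rights encoding, plus the en passant target square after a double pawn push:

```cpp
#include <array>
#include <cassert>
#include <cstdint>

// Castling-rights bits (hypothetical encoding).
enum : uint8_t { WK = 1, WQ = 2, BK = 4, BQ = 8 };

// Relevant 0x88 squares (rank * 16 + file).
constexpr int A1 = 0x00, E1 = 0x04, H1 = 0x07, A8 = 0x70, E8 = 0x74, H8 = 0x77;

// For each square, the rights that survive a move touching it. Kings and rooks
// moving away, or a rook being captured at home, clear the matching bits.
std::array<uint8_t, 128> makeCastleMask() {
    std::array<uint8_t, 128> m;
    m.fill(WK | WQ | BK | BQ);
    m[E1] = BK | BQ;
    m[H1] = WQ | BK | BQ;
    m[A1] = WK | BK | BQ;
    m[E8] = WK | WQ;
    m[H8] = WK | WQ | BQ;
    m[A8] = WK | WQ | BK;
    return m;
}

// Apply after every move, captures included.
uint8_t updateCastling(uint8_t rights, int from, int to, const std::array<uint8_t, 128>& mask) {
    return rights & mask[from] & mask[to];
}

// After a double pawn push, the en passant target is the square the pawn skipped.
int enPassantTarget(int from, int to) { return (from + to) / 2; }
```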

I also optimized the minimax search, so it should now prune better! I wrote a small blog post about this part of the engine and I’ll add more soon, since I WILL have to improve the search by A LOT if I want to get to 2000+ (perhaps even switching away from minimax!)

The next step is to make an NNUE to evaluate boards instead of the hand-made evaluation function. Here I leave you with a game our engine won against Stockfish level 5, which is supposed to be 1900 ELO.


I implemented Quiescence search so that the engine doesn’t fall victim to the Horizon Effect and keep making stupid sacrifices. I also had to battle a LOT of bugs; I really should change my coding style!
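A minimal sketch of quiescence search in negamax form with “stand pat”, run on a hypothetical toy `Node` type that holds a static evaluation (from the side to move’s point of view) and the positions reachable by captures; a real engine would generate the captures from the board:

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Toy position for illustration only.
struct Node {
    int staticEval;             // evaluation from the side to move's point of view
    std::vector<Node> captures; // positions reachable by a capture
};

// Only captures are searched, and the side to move may "stand pat" (stop capturing)
// whenever its static evaluation is already good enough. Fail-hard negamax form.
int quiescence(const Node& n, int alpha, int beta) {
    const int standPat = n.staticEval;
    if (standPat >= beta) return beta;
    alpha = std::max(alpha, standPat);
    for (const Node& child : n.captures) {
        const int score = -quiescence(child, -beta, -alpha);
        if (score >= beta) return beta;
        alpha = std::max(alpha, score);
    }
    return alpha;
}
```

Because every line may stop at its static evaluation, a capture is only counted as good if it survives the opponent’s recaptures, which is exactly what stops horizon-effect sacrifices at the leaves of the main search.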

Below is a small game played and won against Stockfish level 4, which is approximately 1700 ELO according to Google, so we’re improving!


Complete rewrite + Blog!

The code I wrote was indeed TOO CRAPPY (check the last devlog); it was impossible to continue without a complete rewrite. It really got to that point!

For reference, before the rewrite the engine searched 6K nodes/sec; after the rewrite it reaches 1.9M nodes/sec, roughly a 317x increase!

In the rewrite I used the 0x88 board representation, which was surprisingly nice to use. I also used a table to generalize move generation for every piece except pawns (invented my own technique lol).
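One general way to share a single loop between knights, bishops, rooks, queens and kings on a 0x88 board is a per-piece offset table plus a “slides” flag; this sketch shows that general idea, not necessarily the author’s own technique. It assumes a 128-entry `char` board ('.' empty, uppercase white) and returns target squares only (no check detection); `PieceRule` and `targets` are hypothetical names:

```cpp
#include <array>
#include <cassert>
#include <cctype>
#include <vector>

using Board = std::array<char, 128>;  // index = rank * 16 + file, '.' = empty, uppercase = white

struct PieceRule {
    std::vector<int> offsets;  // 0x88 step deltas: +16 = one rank up, +1 = one file right
    bool slides;               // true: repeat the step until blocked
};

const PieceRule KNIGHT{{33, 31, 18, 14, -14, -18, -31, -33}, false};
const PieceRule BISHOP{{17, 15, -15, -17}, true};
const PieceRule ROOK{{16, 1, -1, -16}, true};
const PieceRule QUEEN{{17, 16, 15, 1, -1, -15, -16, -17}, true};
const PieceRule KING{{17, 16, 15, 1, -1, -15, -16, -17}, false};

// A step off the edge always sets bit 3 (file overflow) or bit 7 (rank overflow or negative).
inline bool onBoard(int sq) { return (sq & 0x88) == 0; }
inline bool isWhite(char c) { return std::isupper(static_cast<unsigned char>(c)) != 0; }

// Target squares for the piece on `from`, using the same loop for every non-pawn piece.
std::vector<int> targets(const Board& board, int from, const PieceRule& rule) {
    std::vector<int> out;
    const bool white = isWhite(board[from]);
    for (int off : rule.offsets) {
        for (int to = from + off; onBoard(to); to += off) {
            if (board[to] == '.') {
                out.push_back(to);                                  // quiet move
            } else {
                if (isWhite(board[to]) != white) out.push_back(to); // capture
                break;                                              // any piece blocks the ray
            }
            if (!rule.slides) break;                                // leapers take one step
        }
    }
    return out;
}
```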

I also wrote a small blog post about how I made this in slightly more detail, if you’re interested. I’ll keep writing these each time I want to make major improvements; it really helps to know exactly what you should do!

From now on there is a lot of room to improve this engine and for it to reach a respectably high ELO. Expect some 2000+ wins in the next devlog!

Made it Bug-Less! (and faster!)

During the past 2 days I wrote a lot of crappy code, like, A LOT OF CRAPPY CODE, so crappy that much of it was straight-up incorrect. I had to spend a lot of time debugging and playing against it in Cute-Chess and Lichess to find out where the issues were. I also made some slight optimizations like better move ordering and less memory usage, and I finally deployed it to Lichess! If the bot is offline for some reason, shoot me a message on Slack and I’ll revive it!

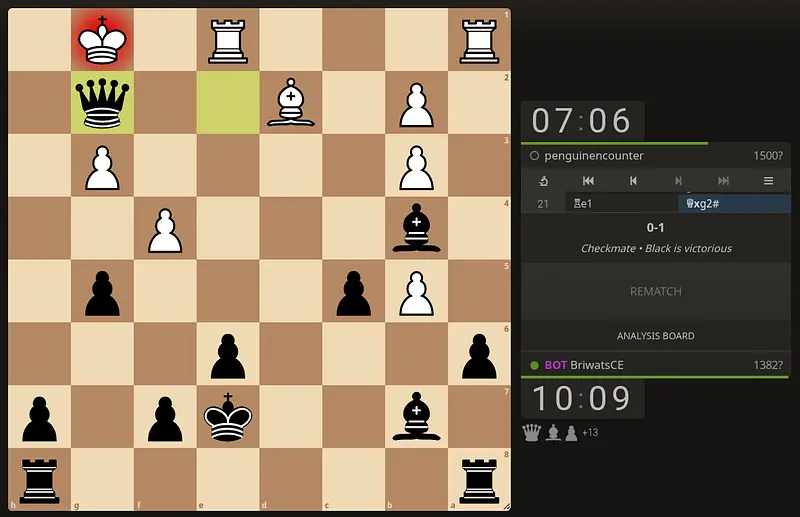

Special thanks to @penguinencounter and @tom for helping me test the engine! Below is an image of @penguinencounter’s game against it!

Made it visual!

I implemented UCI, which made it easier to visualize the chess engine and will hopefully make it easier to debug! I also had to rewrite some core functions to optimize the engine further. It still struggles at depths 6 and 7 and barely stays within 1s at depth 5, so getting it to depth 7 in under 1s and making it bug-free should be the shipping goal!

What was done:

- Optimize move generation (`domove`/`undomove` instead of storing boards!)
- Implement UCI move notation
- Implement UCI and hook it up with Cute-Chess and Lichess
- Make Board non-global and pass it by reference instead
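The do/undo idea can be sketched as recording only what a move destroys and restoring it afterwards, instead of copying the whole board. This sketch assumes a hypothetical `Position` holding a 0x88 `char` array and handles only normal moves and captures (castling, en passant and promotions would need extra `Undo` fields); `makeMove`, `unmakeMove` and `toUci` are illustrative names. It also shows UCI long algebraic notation for a move:

```cpp
#include <array>
#include <cassert>
#include <string>

struct Position {
    std::array<char, 128> sq{};  // 0x88 board, '.' = empty
    bool whiteToMove = true;
    Position() { sq.fill('.'); }
};

struct Move { int from, to; };
struct Undo { char captured; };  // would also hold castling rights, en passant square, etc.

// Apply a move in place and return what is needed to take it back.
Undo makeMove(Position& p, Move m) {
    const Undo u{p.sq[m.to]};
    p.sq[m.to] = p.sq[m.from];
    p.sq[m.from] = '.';
    p.whiteToMove = !p.whiteToMove;
    return u;
}

// Restore the position exactly as it was before makeMove(p, m).
void unmakeMove(Position& p, Move m, const Undo& u) {
    p.sq[m.from] = p.sq[m.to];
    p.sq[m.to] = u.captured;
    p.whiteToMove = !p.whiteToMove;
}

// UCI long algebraic notation: from-square then to-square, e.g. "e2e4".
std::string toUci(Move m) {
    auto name = [](int s) {
        return std::string{static_cast<char>('a' + (s & 7)), static_cast<char>('1' + (s >> 4))};
    };
    return name(m.from) + name(m.to);
}
```

Since a depth-first search always takes moves back in reverse order, one `Undo` per ply on the call stack is enough, and the board itself can be passed by reference.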


Made the chess engine functional!

I’ve finished the longest part, which is move generation. For now the engine fully traverses depth 5 from the initial position in an average of 1.24s, even with alpha-beta pruning.
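To show what alpha-beta pruning saves, here is a minimal sketch comparing plain negamax with fail-hard alpha-beta on a hypothetical toy game tree (`Tree`, `build` and the node counters are illustrative, not the engine’s code). With a full window both return the same root value, but alpha-beta never visits more nodes:

```cpp
#include <algorithm>
#include <cassert>
#include <vector>

// Toy game tree: leaf values are from the point of view of the side to move at that leaf.
struct Tree {
    int value;                  // used only at leaves
    std::vector<Tree> children;
};

// Plain negamax: visits every node.
int negamax(const Tree& t, long& nodes) {
    ++nodes;
    if (t.children.empty()) return t.value;
    int best = -1'000'000;
    for (const Tree& c : t.children) best = std::max(best, -negamax(c, nodes));
    return best;
}

// Fail-hard alpha-beta: stops searching a node once it is proven irrelevant.
int alphaBeta(const Tree& t, int alpha, int beta, long& nodes) {
    ++nodes;
    if (t.children.empty()) return t.value;
    for (const Tree& c : t.children) {
        const int score = -alphaBeta(c, -beta, -alpha, nodes);
        if (score >= beta) return beta;  // cutoff: the opponent will avoid this line
        alpha = std::max(alpha, score);
    }
    return alpha;
}

// Uniform tree with pseudo-random leaves in [-100, 100] (simple LCG for reproducibility).
Tree build(int depth, int branching, unsigned& seed) {
    Tree t{0, {}};
    if (depth == 0) {
        seed = seed * 1103515245u + 12345u;
        t.value = static_cast<int>(seed >> 16) % 201 - 100;
        return t;
    }
    for (int i = 0; i < branching; ++i) t.children.push_back(build(depth - 1, branching, seed));
    return t;
}
```

How much is saved depends heavily on move ordering: with the best move searched first at every node, alpha-beta approaches the square root of the full tree size.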

The next steps are implementing UCI, optimizing move generation, perhaps attempting Monte Carlo search and other non-brute-force methods, and training an NNUE to evaluate boards instead of the hand-made evaluation function.

